Start Here: Learning Paths

If you are new to this site, the archive can feel large. This page groups the main books, articles, courses, and reference pages into a few learning paths so you can pick the route that matches what you want to understand next.

Build LLMs from scratch

Architecture, tokenization, training, and PyTorch implementation.

Reasoning models and advanced training

Reinforcement learning, inference-time scaling, distillation, and evaluation.

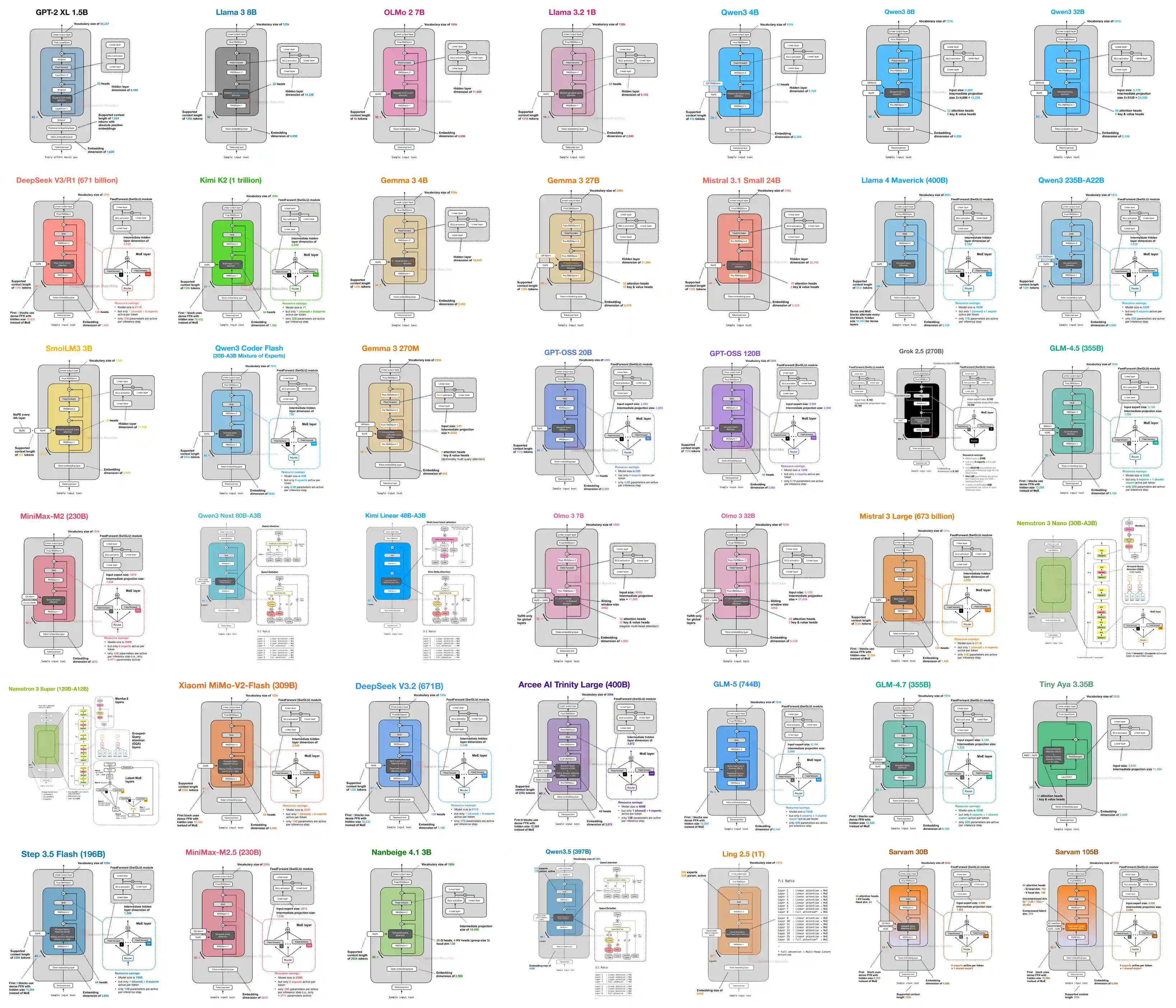

Compare LLM architectures

Attention variants, MoE, KV cache, and modern model families.

Learn practical ML and PyTorch

Applied machine learning, deep learning, and course material.

Learning Paths

Build LLMs from scratch

Best if you want the bottom-up route: tokenization, attention, GPT-style models, pretraining, and finetuning.

- Start with Build a Large Language Model (From Scratch) for the book and course links.

- Use the LLMs-from-scratch code repository as the main implementation companion.

- Read the BPE tokenizer article before diving into tokenization details.

- Work through self-attention from scratch to build the core mental model.

- Add the KV cache article once you start thinking about inference efficiency.

Reasoning models and advanced training

Best if you already know the LLM basics and want to understand reinforcement learning for LLMs, inference-time scaling, distillation, and other post-training methods behind modern reasoning models.

- Read Understanding Reasoning LLMs for the high-level map of reasoning methods.

- Read the inference-time scaling overview for test-time compute methods.

- Read the RL for reasoning article for GRPO, reinforcement learning, and advanced post-training ideas.

- Start with Build a Reasoning Model (From Scratch) for the book and repository links.

- Use the reasoning-from-scratch repository as the main implementation companion.

Compare LLM architectures

Best if you want to understand how today's open-weight models differ in attention, MoE, normalization, and inference tradeoffs.

- Browse the LLM Architecture Gallery for a visual overview of model families.

- Read The Big LLM Architecture Comparison for the main architecture narrative.

- Read the visual guide to attention variants for MHA, GQA, MLA, sparse attention, and hybrids.

- Read the workflow article to see how to inspect new open-weight releases.

- Read the gallery announcement for context on how the reference collection is organized.

Learn practical ML and PyTorch

Best if you want a broader applied machine learning route before or alongside modern LLM material.

- Use Machine Learning with PyTorch and Scikit-Learn for a full applied path.

- Try PyTorch in One Hour for a compact refresher.

- Study the university deep learning course for a longer lecture series and its accompanying material.

- Use the deep learning resources page for older but still useful model notebooks and references.

- Read Machine Learning Q and AI for concise explanations of common modern ML and AI concepts.