Machine Learning FAQ

Why can a pretrained model answer some questions but still be bad at following instructions?

A pretrained model can answer some questions because pretraining already teaches it a lot about language, facts, and common text patterns. It can still be bad at following instructions because pretraining optimizes for predicting text, not for carrying out requests; that second skill comes from instruction tuning.

During pretraining, the model learns next-token prediction on raw text. That teaches useful general capabilities:

- factual associations

- syntax and style

- common question-answer patterns

- broad world knowledge encoded in the data

So if you ask a direct question, the model often produces a reasonable answer simply because similar question-and-answer continuations appeared in its training data.
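For reference, the next-token objective mentioned above is commonly written as an average negative log-likelihood over a token sequence. The notation is introduced here for illustration and is not from the original answer: $x_t$ is the token at position $t$, $x_{<t}$ is everything before it, $T$ is the sequence length, and $\theta$ are the model parameters.

```latex
\mathcal{L}_{\text{pretrain}}(\theta)
  = -\frac{1}{T} \sum_{t=1}^{T} \log p_\theta\left(x_t \mid x_{<t}\right)
```

Nothing in this objective distinguishes a question from any other text. An answer comes out only to the extent that it is a probable continuation of the prompt.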

However, that does not mean it has been trained to:

- obey the user’s requested format

- stay concise when asked

- follow multi-part instructions reliably

- refuse or hedge appropriately when needed

This is why base models can feel inconsistent. Sometimes they complete a prompt beautifully. Sometimes they ignore part of the request, continue in an odd style, or answer in a way that looks more like generic continuation than cooperative assistance.
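The "generic continuation" behavior can be illustrated with a deliberately tiny stand-in for a base model. The sketch below is a toy trigram counter, not a neural network. The corpus, function names, and prompts are all hypothetical, and a two-token context exaggerates the effect far beyond what a real model with a long context window would show.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus standing in for raw pretraining text.
CORPUS = [
    "the capital of france is paris .",
    "the sky is blue .",
    "what is the capital of france ? the capital of france is paris .",
]

def train_trigram(sentences):
    """Count which token follows each pair of consecutive tokens."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for a, b, nxt in zip(tokens, tokens[1:], tokens[2:]):
            counts[(a, b)][nxt] += 1
    return counts

def complete(counts, prompt, max_new_tokens=10):
    """Greedily append the most frequent next token until no continuation is known."""
    tokens = prompt.split()
    generated = []
    for _ in range(max_new_tokens):
        context = tuple(tokens[-2:])  # the toy model only sees the last two tokens
        if context not in counts:
            break
        next_token = counts[context].most_common(1)[0][0]
        tokens.append(next_token)
        generated.append(next_token)
    return " ".join(generated)

model = train_trigram(CORPUS)
plain = complete(model, "what is the capital of france ?")
with_format = complete(model, "answer in one word : what is the capital of france ?")

print(plain)        # the capital of france is paris .
print(with_format)  # the capital of france is paris .
```

The toy model "answers" the question because that continuation appeared in its data, yet the request for a one-word answer changes nothing: it was only ever trained to continue text, never to satisfy a request.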

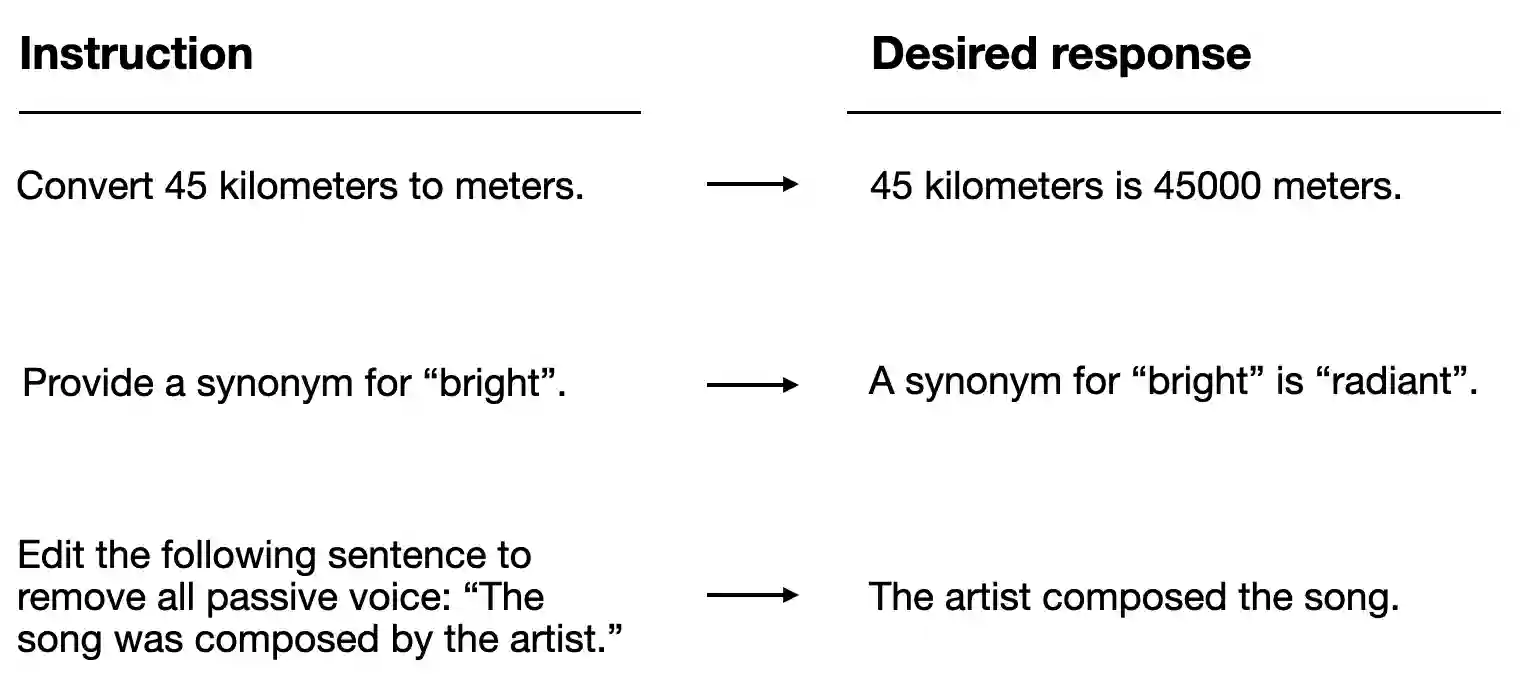

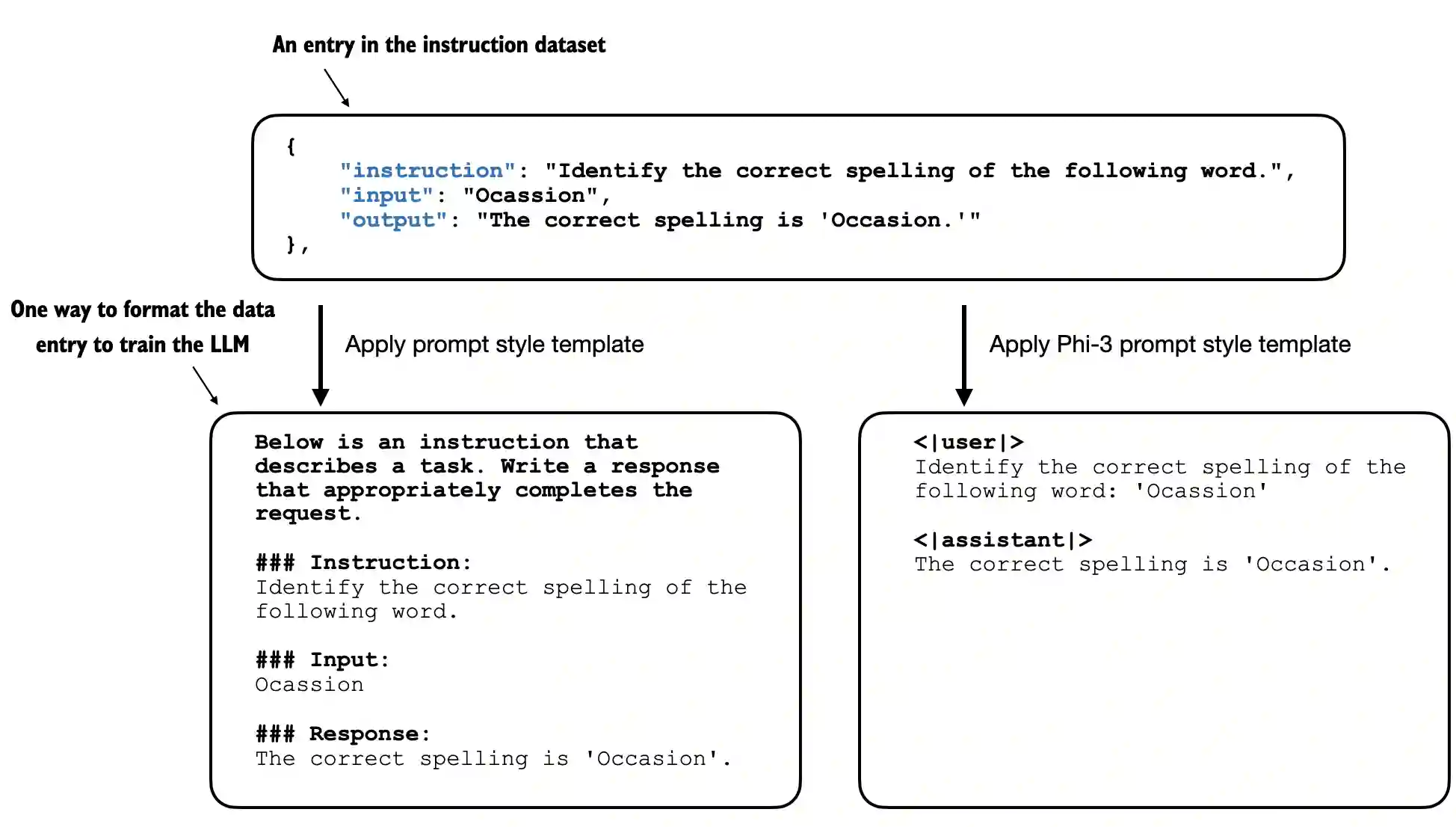

Instruction tuning (also called instruction finetuning) helps because it trains the model on examples where the desired behavior is explicit: here is the instruction, here is the preferred response.
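As a rough sketch of what "here is the instruction, here is the preferred response" can look like as training data, the snippet below builds one example with a loss mask. It makes several assumptions: the prompt template, the whitespace "tokenizer", and the `build_example` helper are hypothetical. Masking prompt tokens so that only the response contributes to the loss is a common choice rather than a universal one. The value `-100` matches the default `ignore_index` of PyTorch's `CrossEntropyLoss`, and the one-position shift between inputs and targets is assumed to happen later, inside the loss computation.

```python
IGNORE_INDEX = -100  # targets with this value are skipped by PyTorch's CrossEntropyLoss by default

def build_example(instruction, response, vocab):
    """Turn one (instruction, response) pair into token ids and training labels."""
    # Hypothetical prompt template; real datasets each define their own.
    prompt_tokens = f"### Instruction: {instruction} ### Response:".split()
    response_tokens = response.split() + ["<eos>"]

    def encode(tokens):
        # Assign a new id the first time a token is seen; a stand-in for a real tokenizer.
        return [vocab.setdefault(tok, len(vocab)) for tok in tokens]

    prompt_ids = encode(prompt_tokens)
    response_ids = encode(response_tokens)

    input_ids = prompt_ids + response_ids
    # Only response positions carry a training signal; prompt positions are masked out.
    labels = [IGNORE_INDEX] * len(prompt_ids) + response_ids
    return input_ids, labels

vocab = {}
input_ids, labels = build_example(
    "Answer in one word: what is the capital of France?",
    "Paris",
    vocab,
)
print(input_ids)
print(labels)
```

The model still learns by next-token prediction here. What changes is the data: every example pairs a request with a response that actually satisfies it, so following the request becomes the likely continuation.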

So the key distinction is:

- pretraining gives the model broad capability

- instruction tuning teaches the model how to use that capability in the way users want

In short, next-token prediction gives a pretrained model enough language and factual knowledge to answer some questions, but it never explicitly trains the model to treat a prompt as a request that must be satisfied in a particular format or style.