Machine Learning FAQ

What is perplexity, and what does it actually tell us about an LLM?

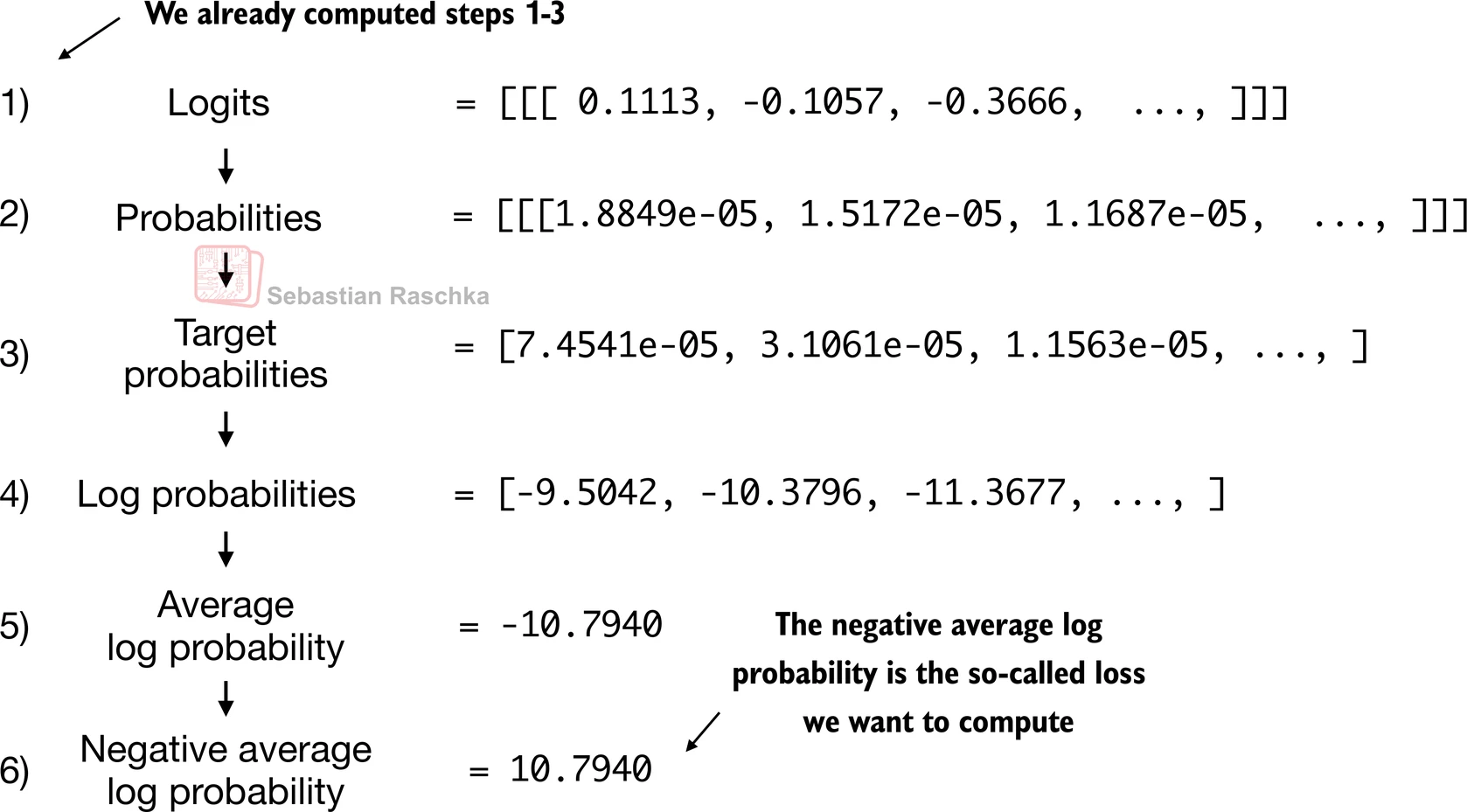

Perplexity is a standard language-modeling metric derived from cross-entropy loss. It tells you how well an LLM predicts the next token on a dataset.

The simplest way to think about it is:

- cross-entropy measures how much probability the model assigns to the correct next tokens

- perplexity is just the exponential form of that average loss

So lower perplexity means the model is less “surprised” by the dataset and predicts its next tokens better.

That makes perplexity useful for questions such as:

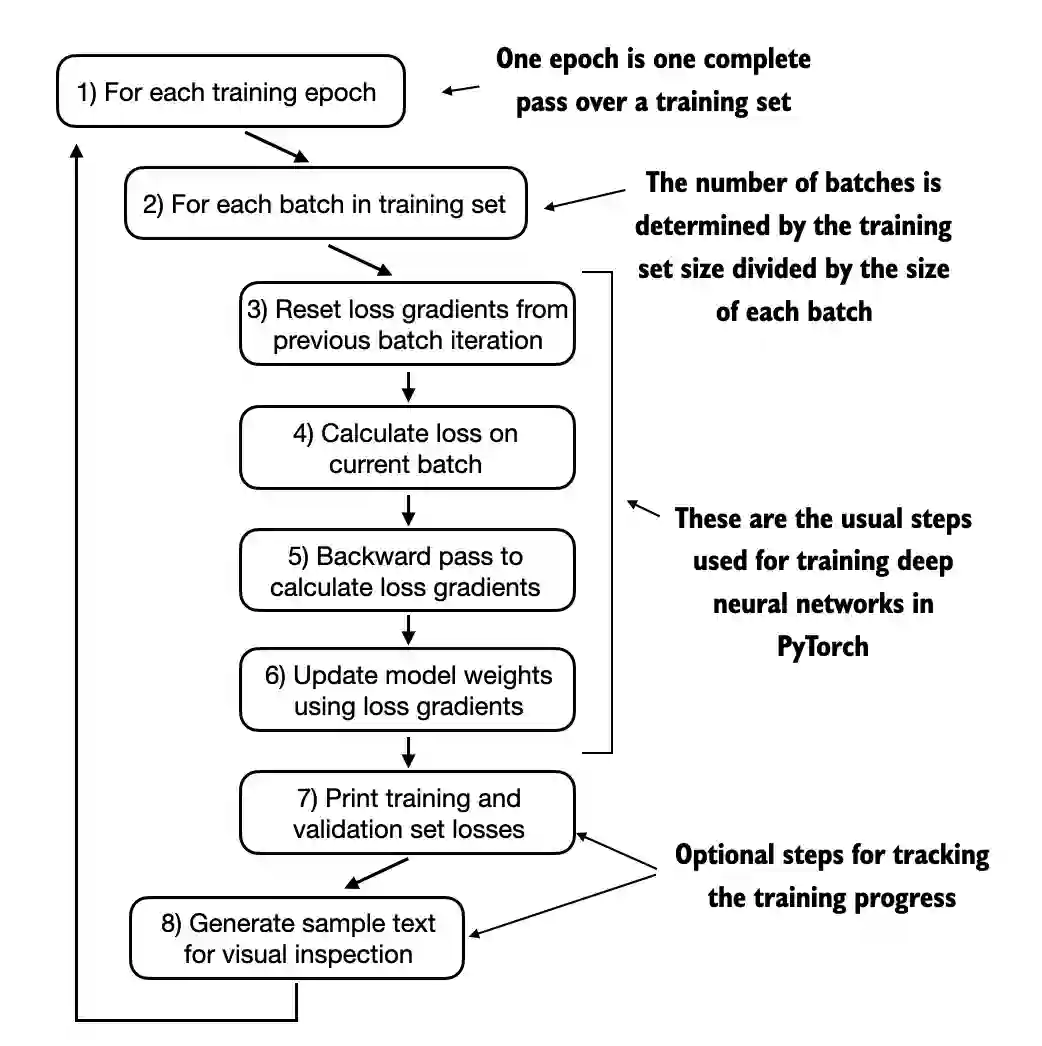

- Is model A better than model B on the same validation set?

- Is training still improving?

- Did a new architecture or tokenizer help next-token prediction?

However, perplexity has important limits.

It is only meaningful when the comparison is fair. In practice, that means the models should be evaluated on the same dataset and ideally with the same tokenization setup. A perplexity value from one tokenizer or corpus is not directly comparable to a value from a different setup.

It also does not directly tell you whether a model is:

- helpful

- aligned

- safe

- good at following instructions

- pleasant to interact with

Those are downstream behavioral qualities, not pure next-token prediction qualities.

A useful interpretation is that perplexity is a language-modeling competence metric, not a full product-quality metric. It tells you how well the model fits a text distribution, which matters a lot, but it is not the whole story once you care about chat behavior, preference alignment, or task usefulness.

In short, perplexity is the exponential form of average cross-entropy loss, and it tells you how well an LLM predicts the next token on a specific dataset, not whether it is broadly helpful or aligned for real-world use.