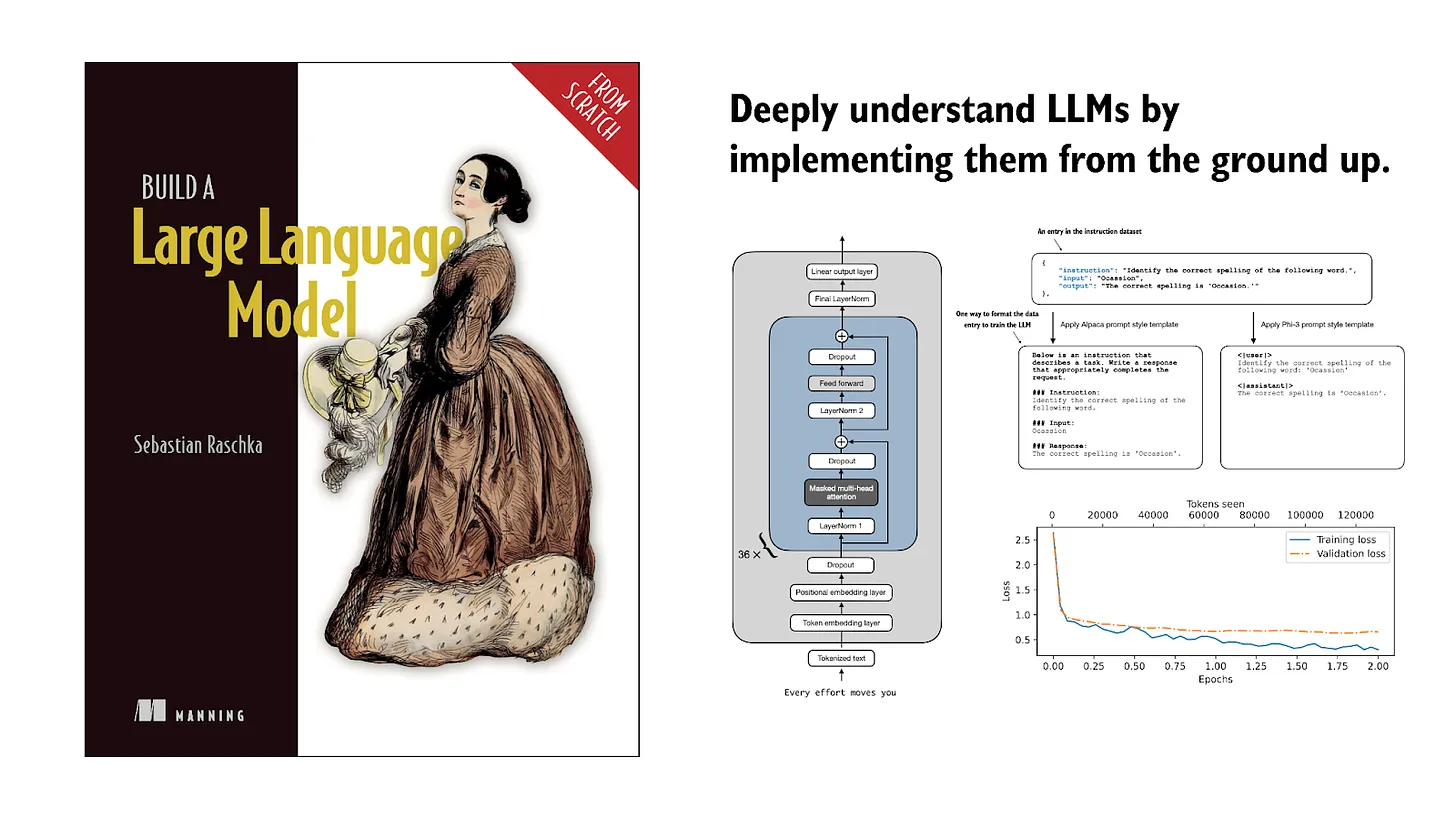

Building LLMs from the Ground Up: A 3-hour Coding Workshop

If you’d like to spend a few hours this weekend diving into Large Language Models (LLMs) and understanding how they work, I’ve prepared a 3-hour coding workshop on implementing, training, and using LLMs.

Below, you’ll find a table of contents to get an idea of what this video covers (the video itself has clickable chapter marks, allowing you to jump directly to topics of interest):

0:00 – Workshop overview

2:17 – Part 1: Intro to LLMs

9:14 – Workshop materials

10:48 – Part 2: Understanding LLM input data

23:25 – A simple tokenizer class

41:03 – Part 3: Coding an LLM architecture

45:01 – GPT-2 and Llama 2

1:07:11 – Part 4: Pretraining

1:29:37 – Part 5.1: Loading pretrained weights

1:45:12 – Part 5.2: Pretrained weights via LitGPT

1:53:09 – Part 6.1: Instruction finetuning

2:08:21 – Part 6.2: Instruction finetuning via LitGPT

2:26:45 – Part 6.3: Benchmark evaluation

2:36:55 – Part 6.4: Evaluating conversational performance

2:42:40 – Conclusion

It’s a slight departure from my usual text-based content, but the last time I did this a few months ago, it was so well-received that I thought it might be nice to do another one!

Happy viewing!

If you read the book and have a few minutes to spare, I'd really appreciate a brief review. It helps us authors a lot!

Your support means a great deal! Thank you!