Best Articles by Topic

A curated, non-chronological guide to the articles I would recommend first. If the blog archive is the full library, this page is the shelf of strongest starting points by topic.

Start here if you want to compare modern open-weight models, attention variants, mixture-of-experts designs, and practical architecture tradeoffs.

The Big LLM Architecture Comparison

The main long-form comparison of recent LLM architecture choices.

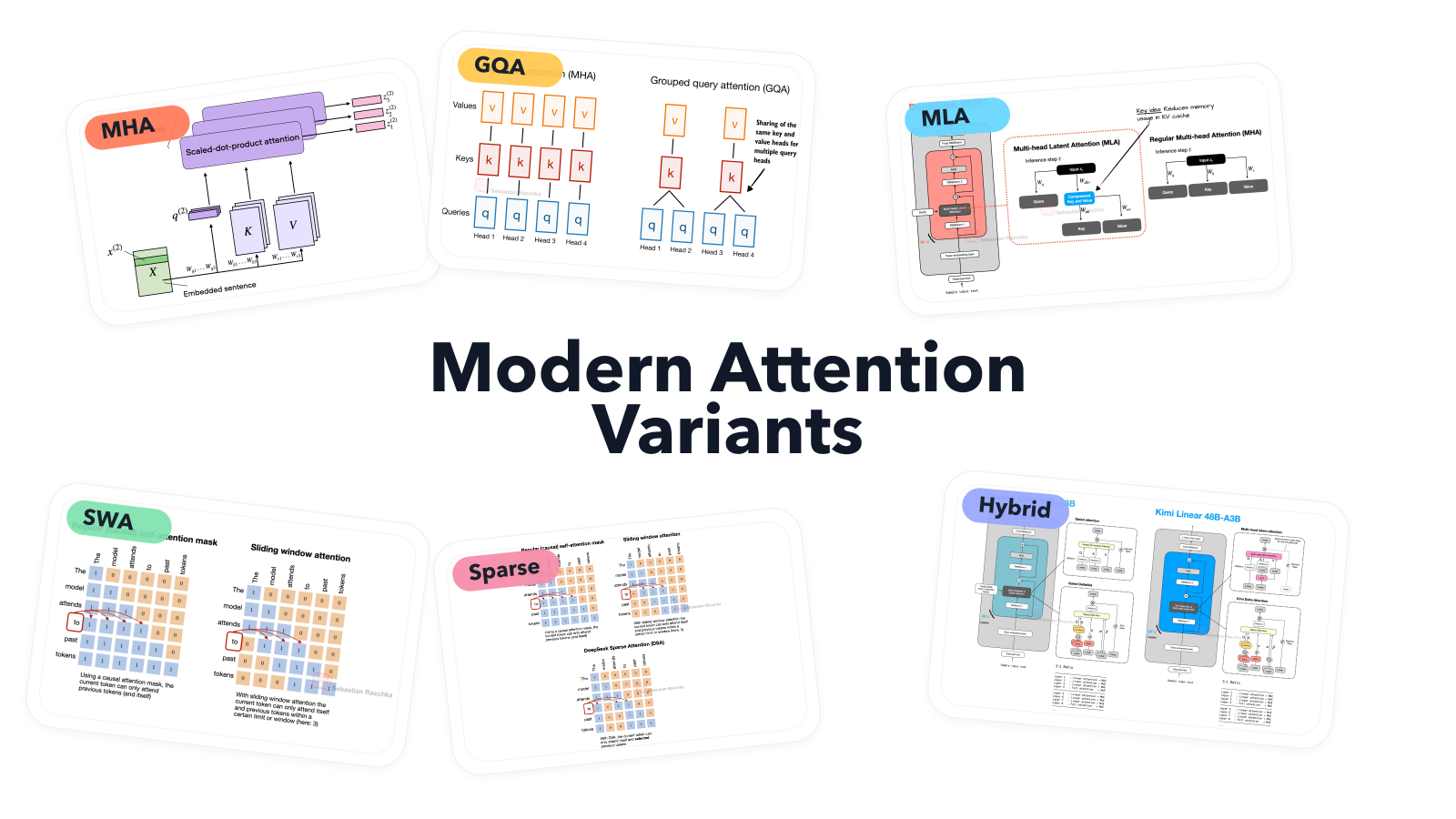

A Visual Guide to Attention Variants in Modern LLMs

A focused guide to MHA, GQA, MLA, sparse attention, and hybrid attention.

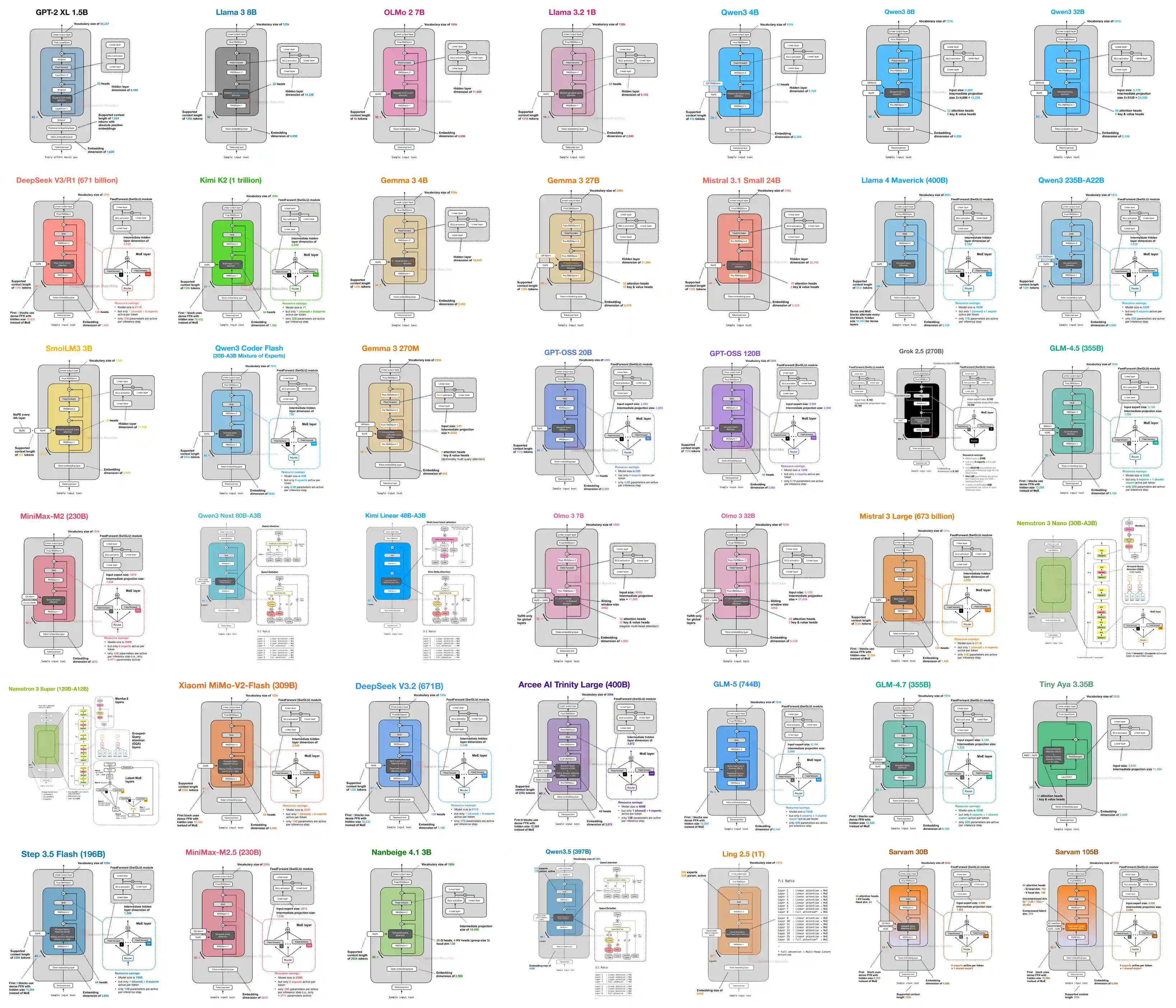

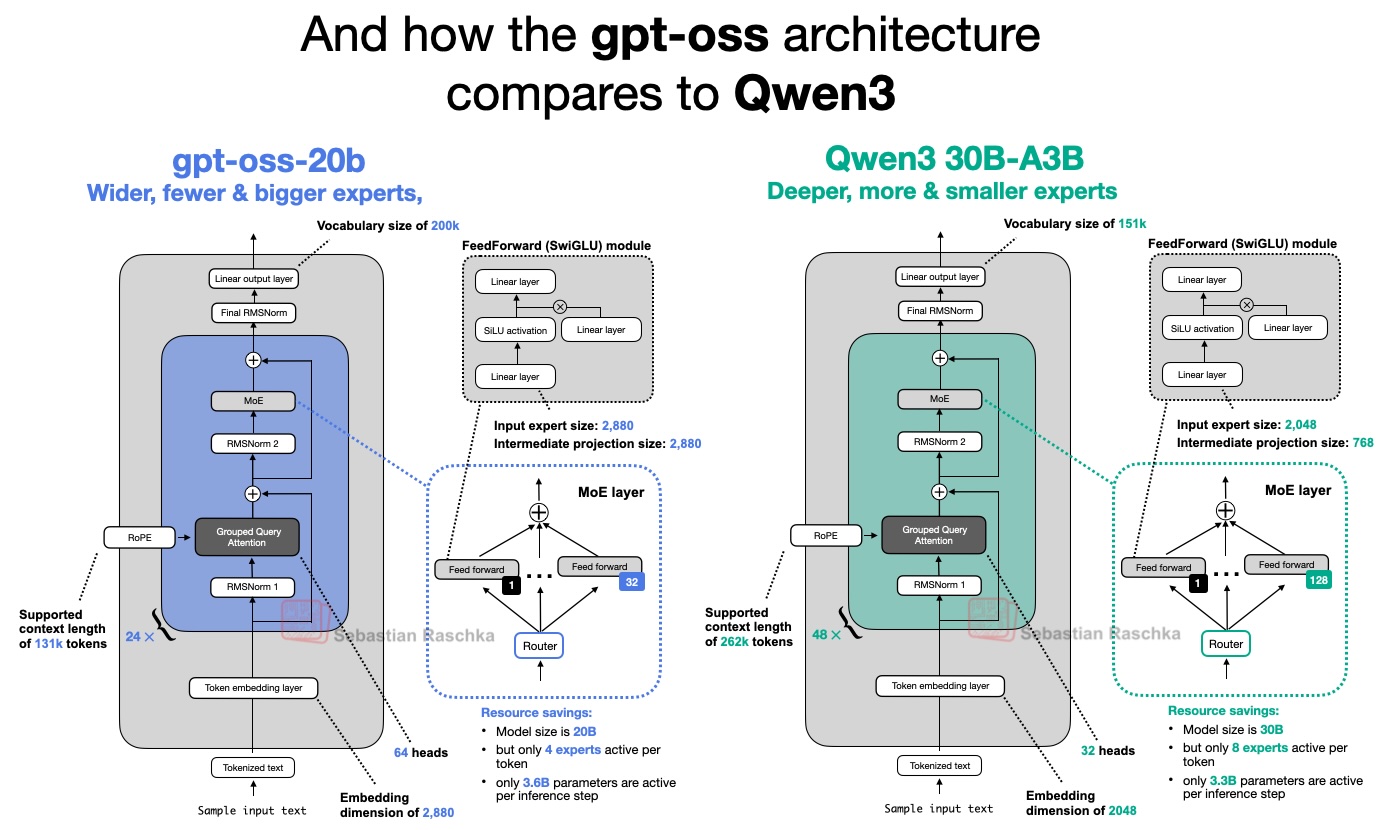

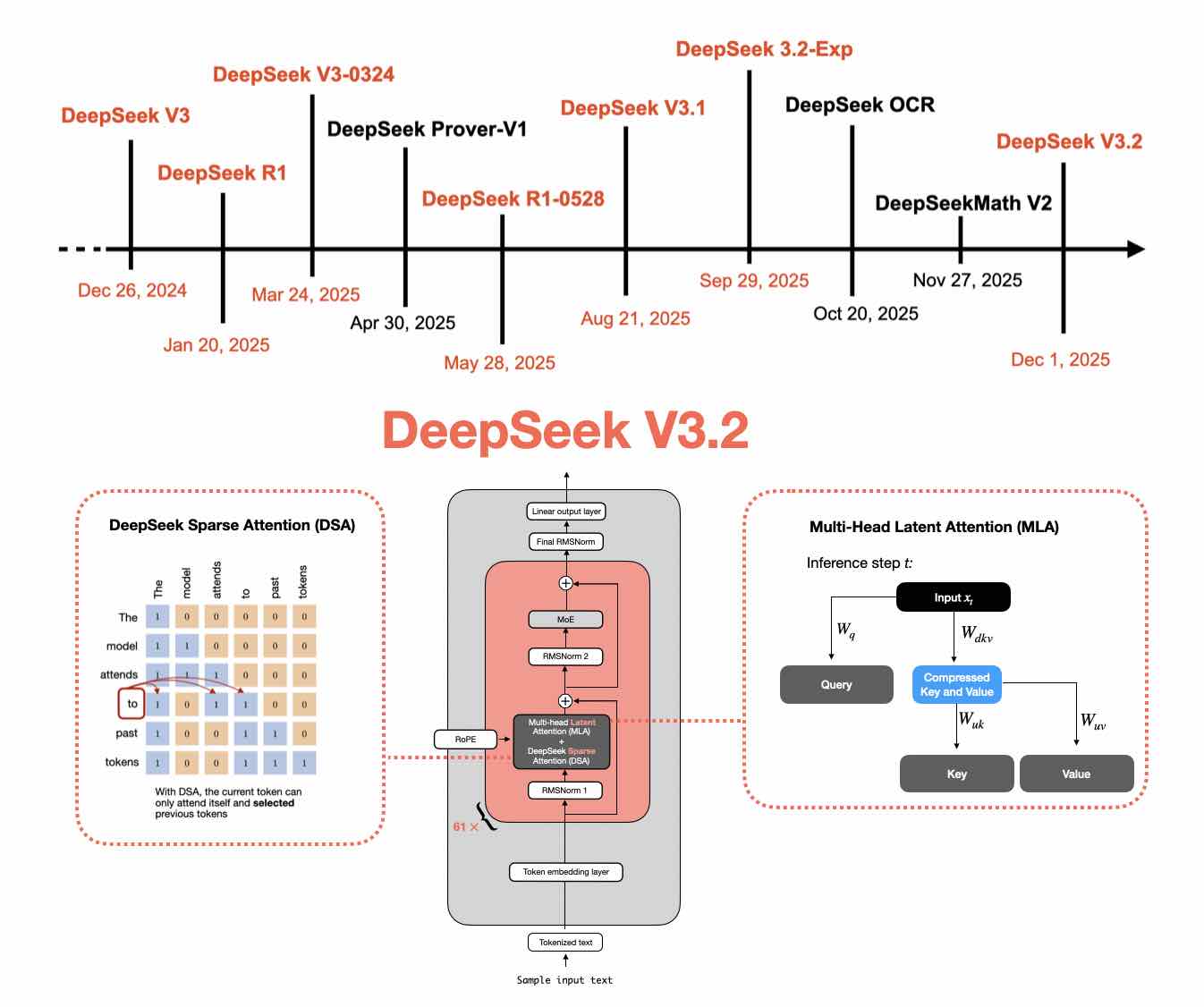

LLM Architecture Gallery

A visual reference collection of architecture figures and compact fact sheets.

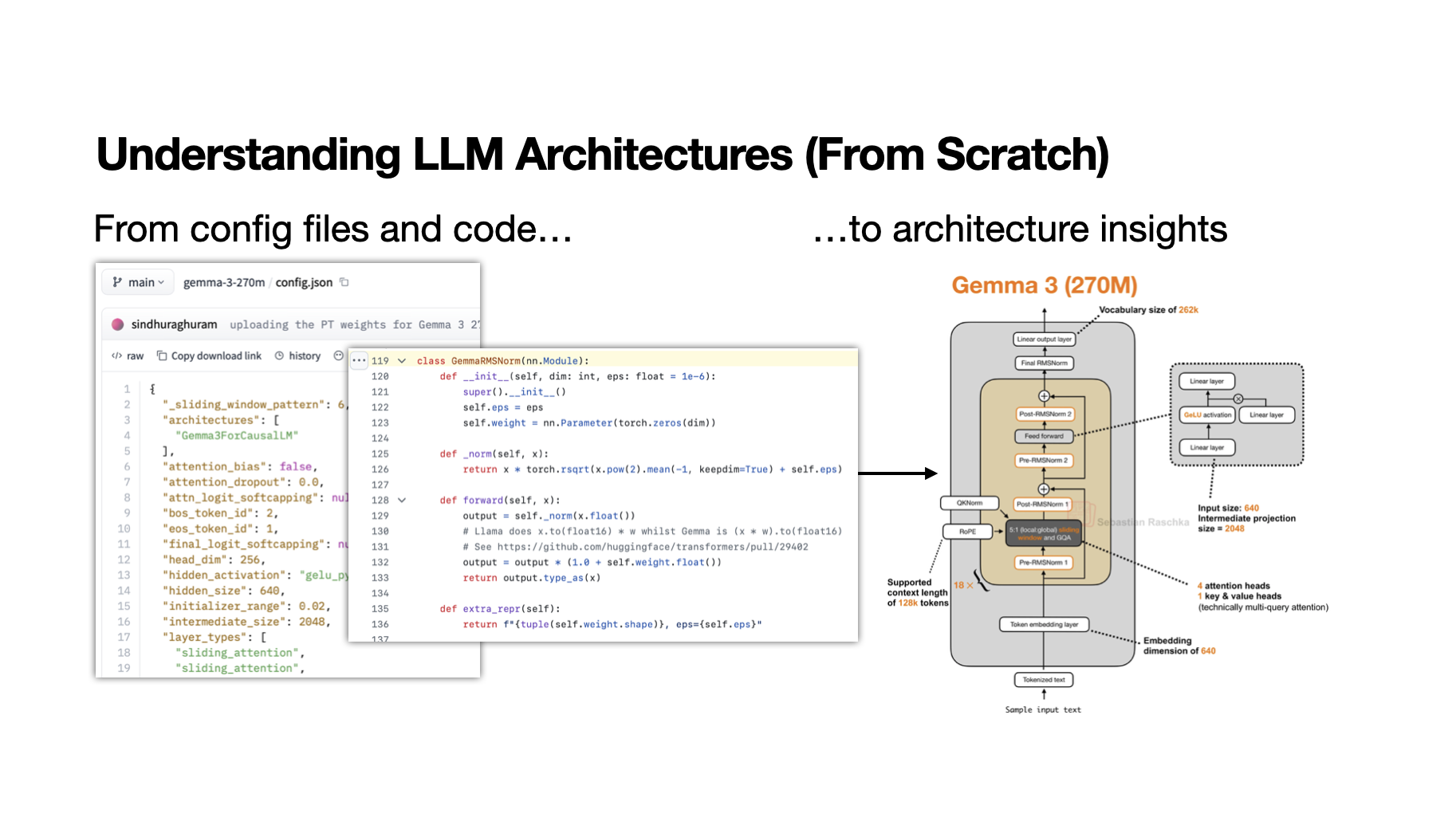

My Workflow for Understanding LLM Architectures

How to inspect open-weight model configs and implementation details.

From GPT-2 to gpt-oss

A bridge from classic GPT-style designs to newer open-weight releases.

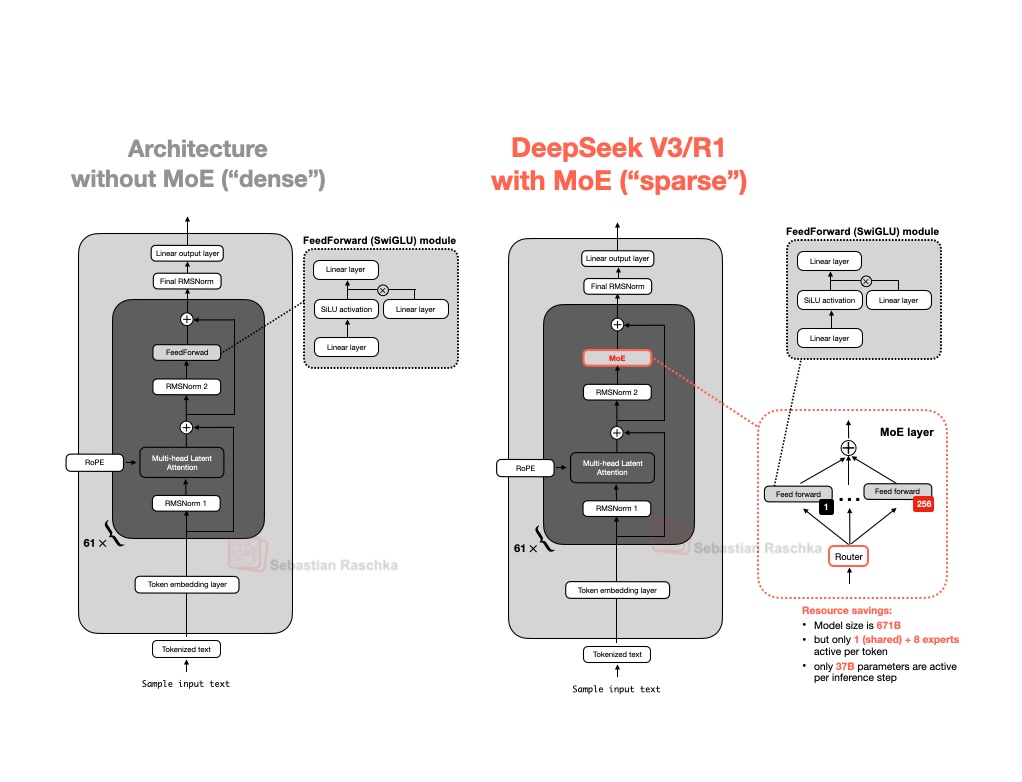

From DeepSeek V3 to V3.2

A technical look at DeepSeek architecture, sparse attention, and RL updates.

These are the most useful articles if your preferred way to understand a method is to implement it and inspect the moving parts.

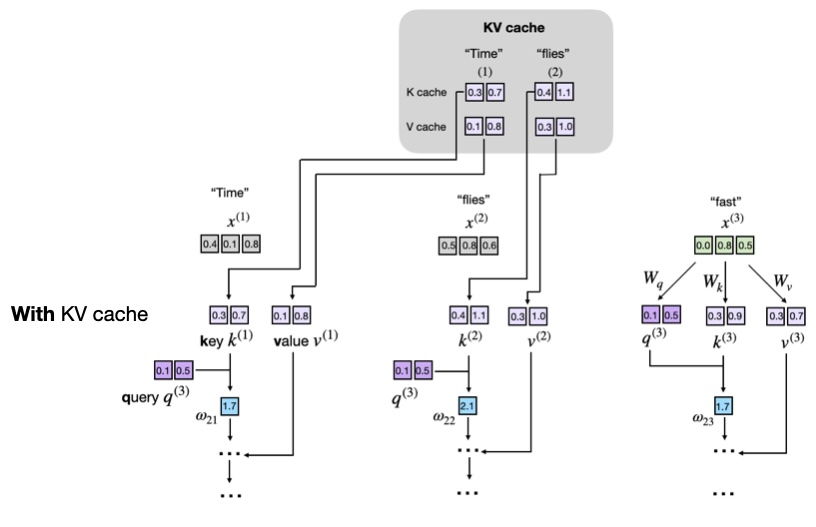

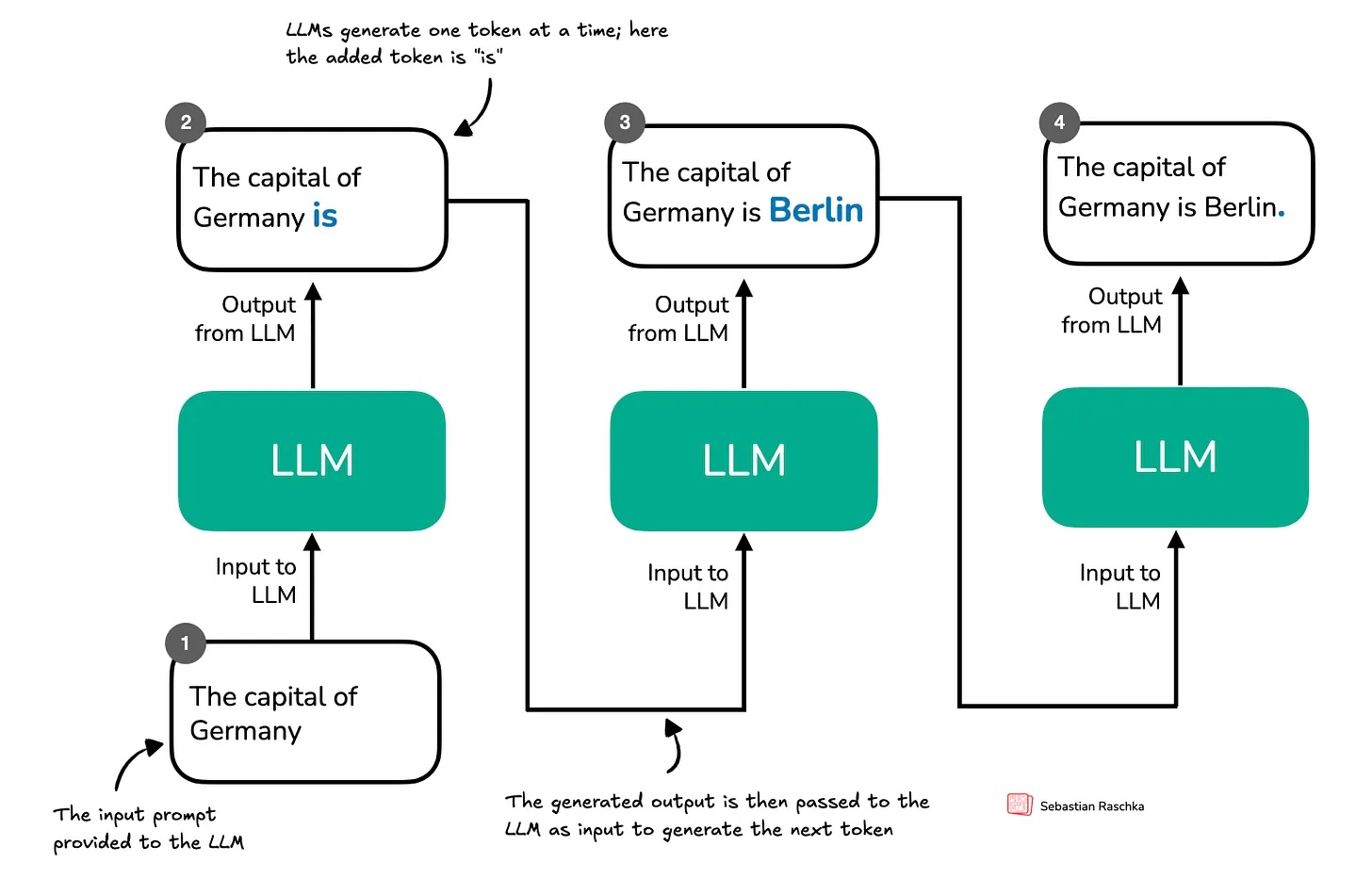

Understanding and Coding the KV Cache in LLMs from Scratch

A practical explanation of one of the key mechanisms behind efficient inference.

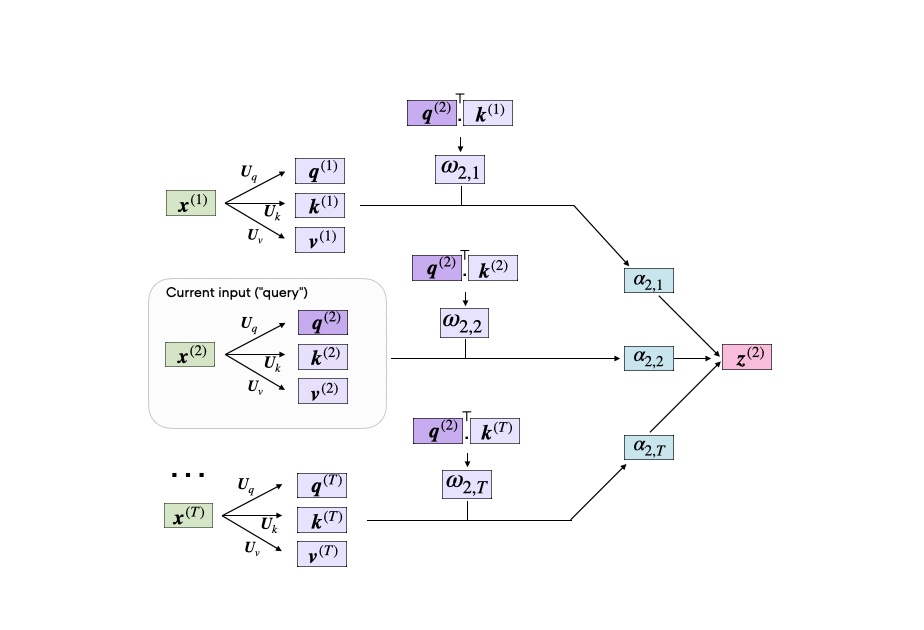

Self-Attention from Scratch

A step-by-step implementation of the core mechanism behind transformer LLMs.

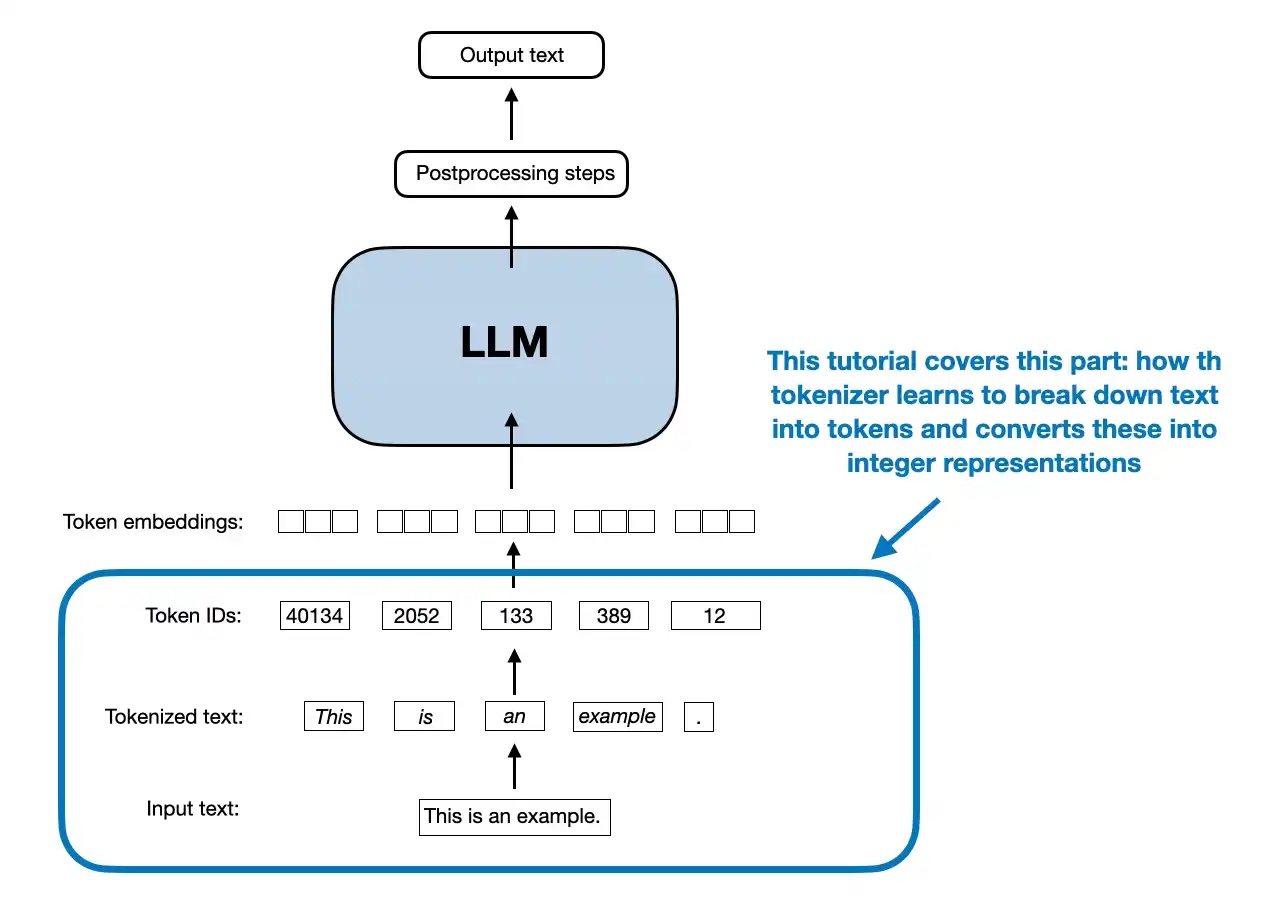

Byte Pair Encoding Tokenizer from Scratch

A standalone implementation of the tokenization algorithm used by many LLMs.

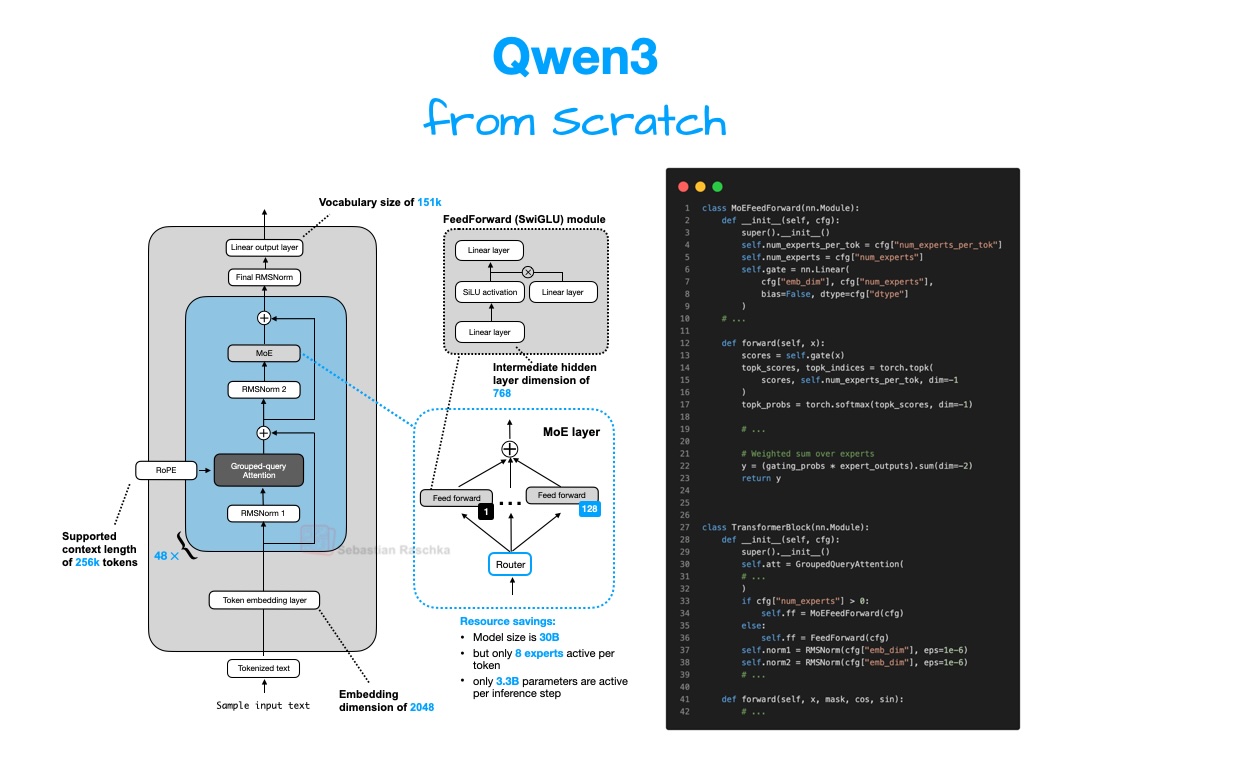

Understanding and Implementing Qwen3 from Scratch

A detailed implementation-focused look at a modern open-weight LLM family.

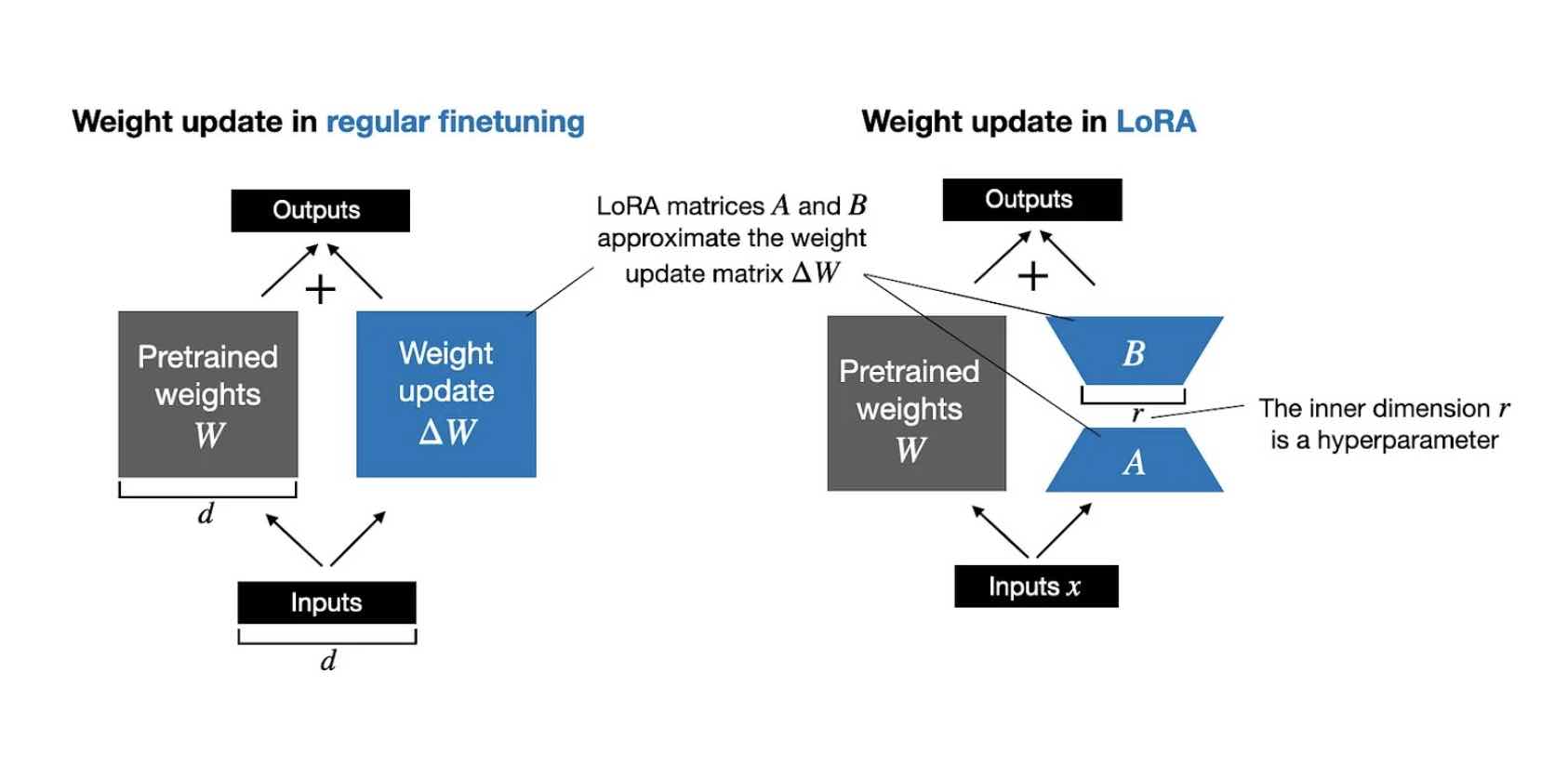

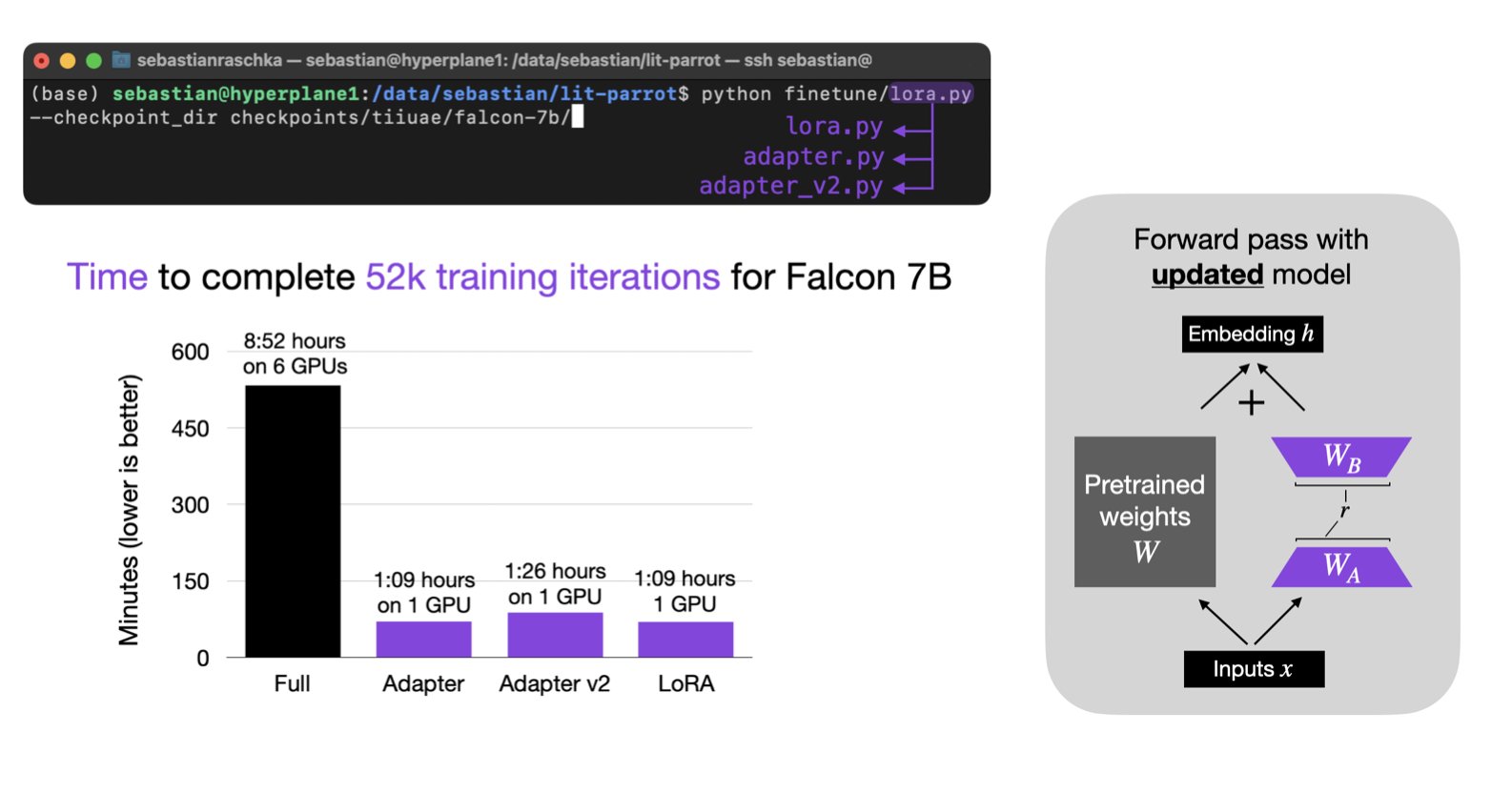

LoRA and DoRA from Scratch

A code-oriented explanation of parameter-efficient adaptation methods.

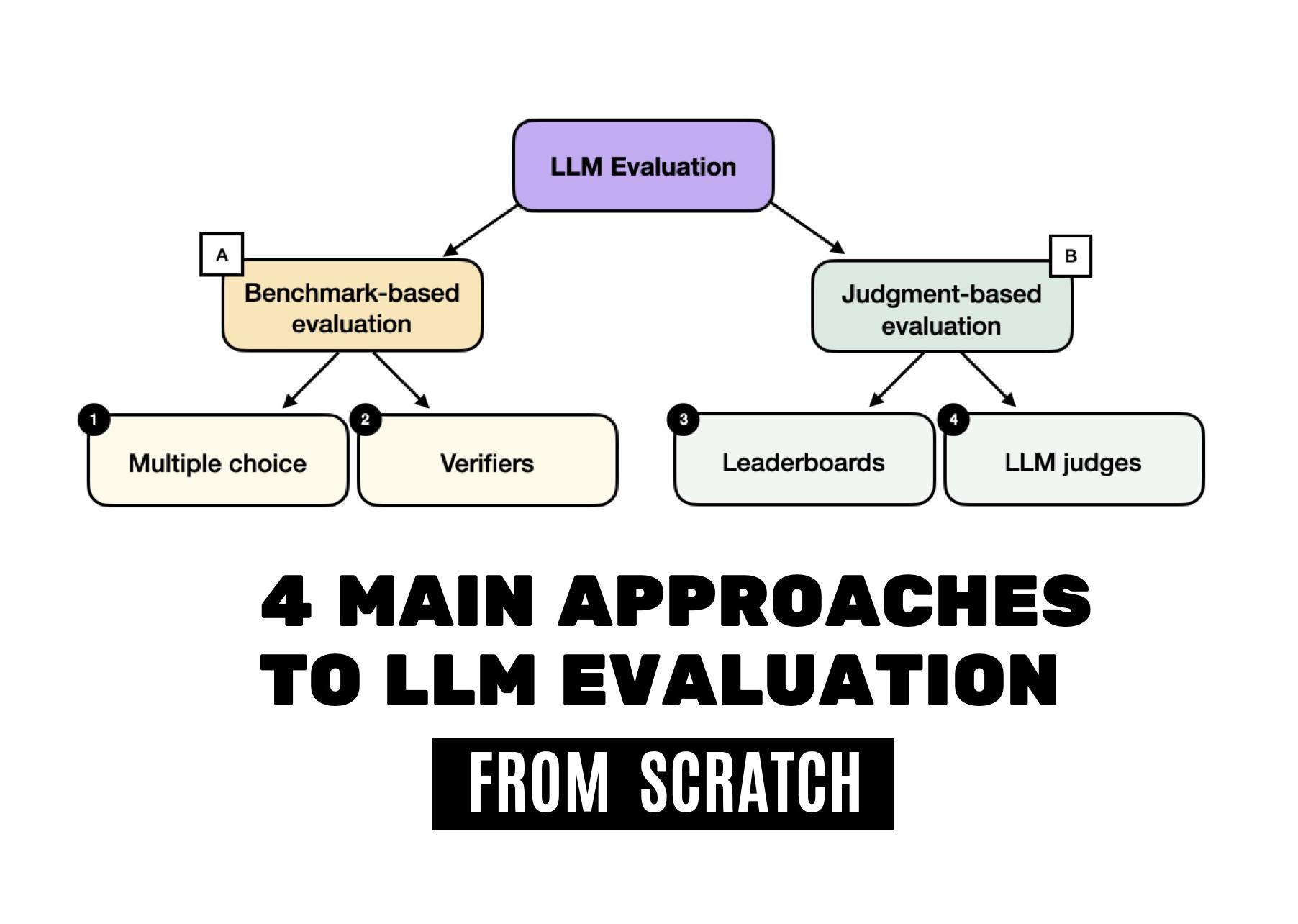

The 4 Main Approaches to LLM Evaluation from Scratch

Benchmarks, verifiers, leaderboards, and LLM judges with concrete examples.

Use this path for reinforcement learning in LLM training, inference-time scaling, distillation, and other advanced reasoning-model methods.

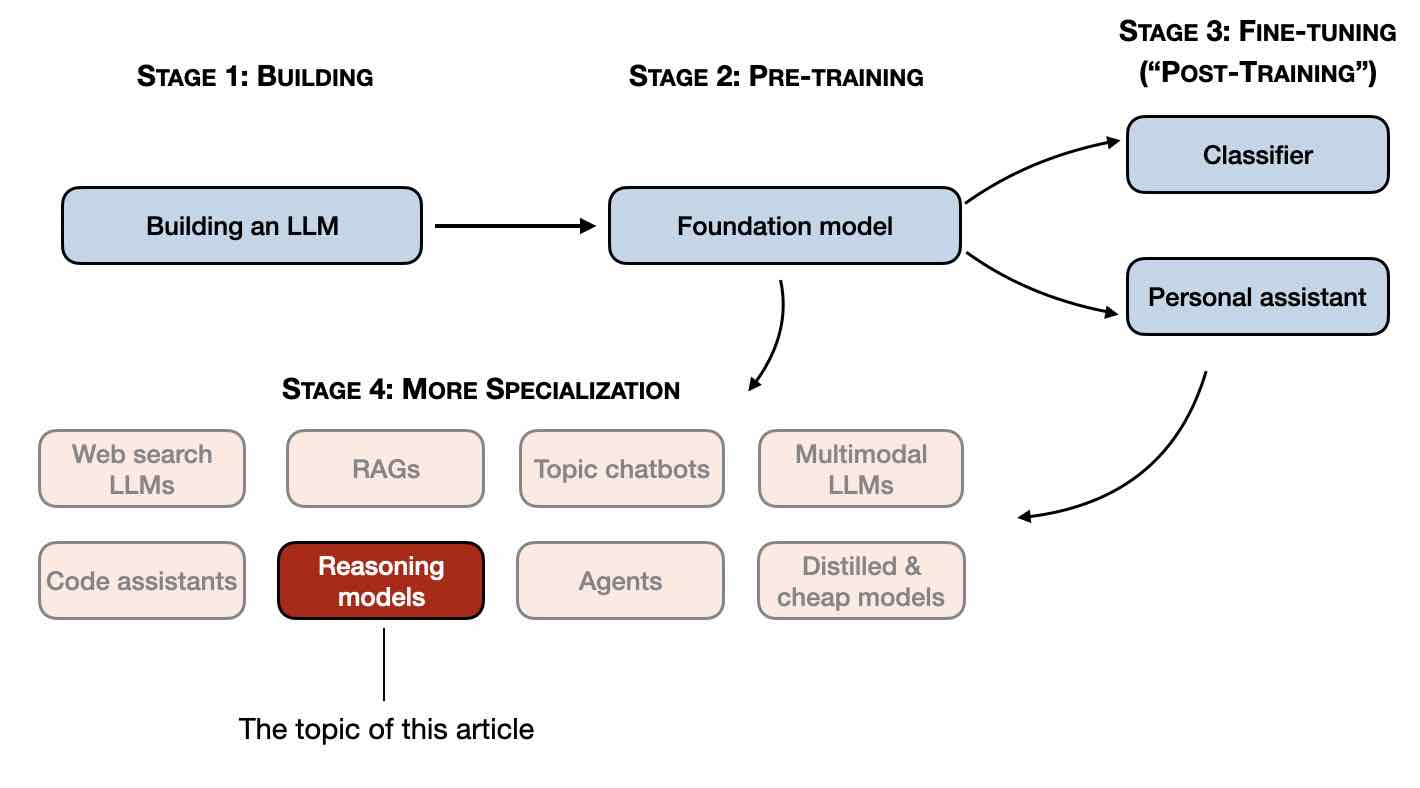

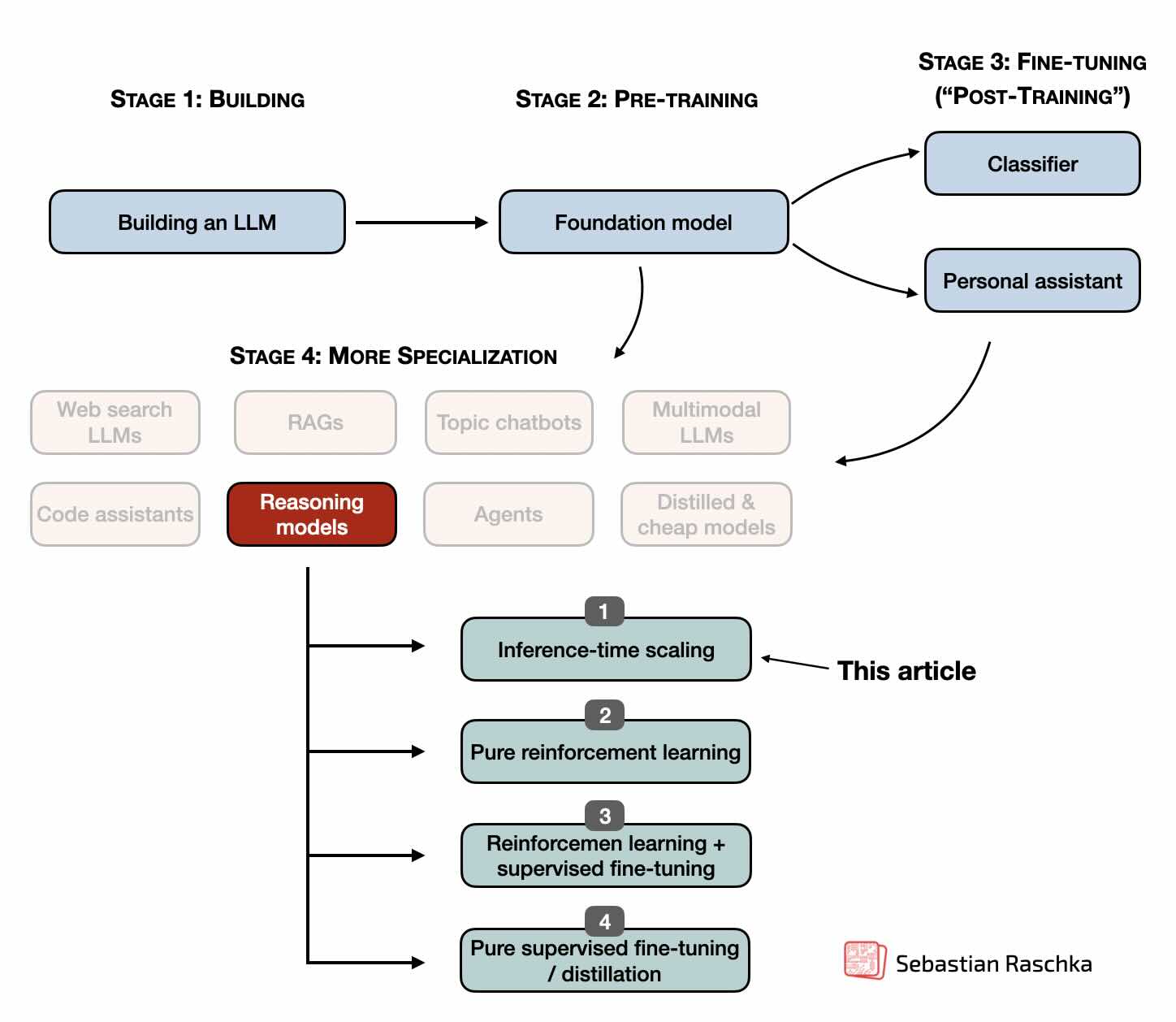

Understanding Reasoning LLMs

The high-level map of reasoning model strategies and where they fit.

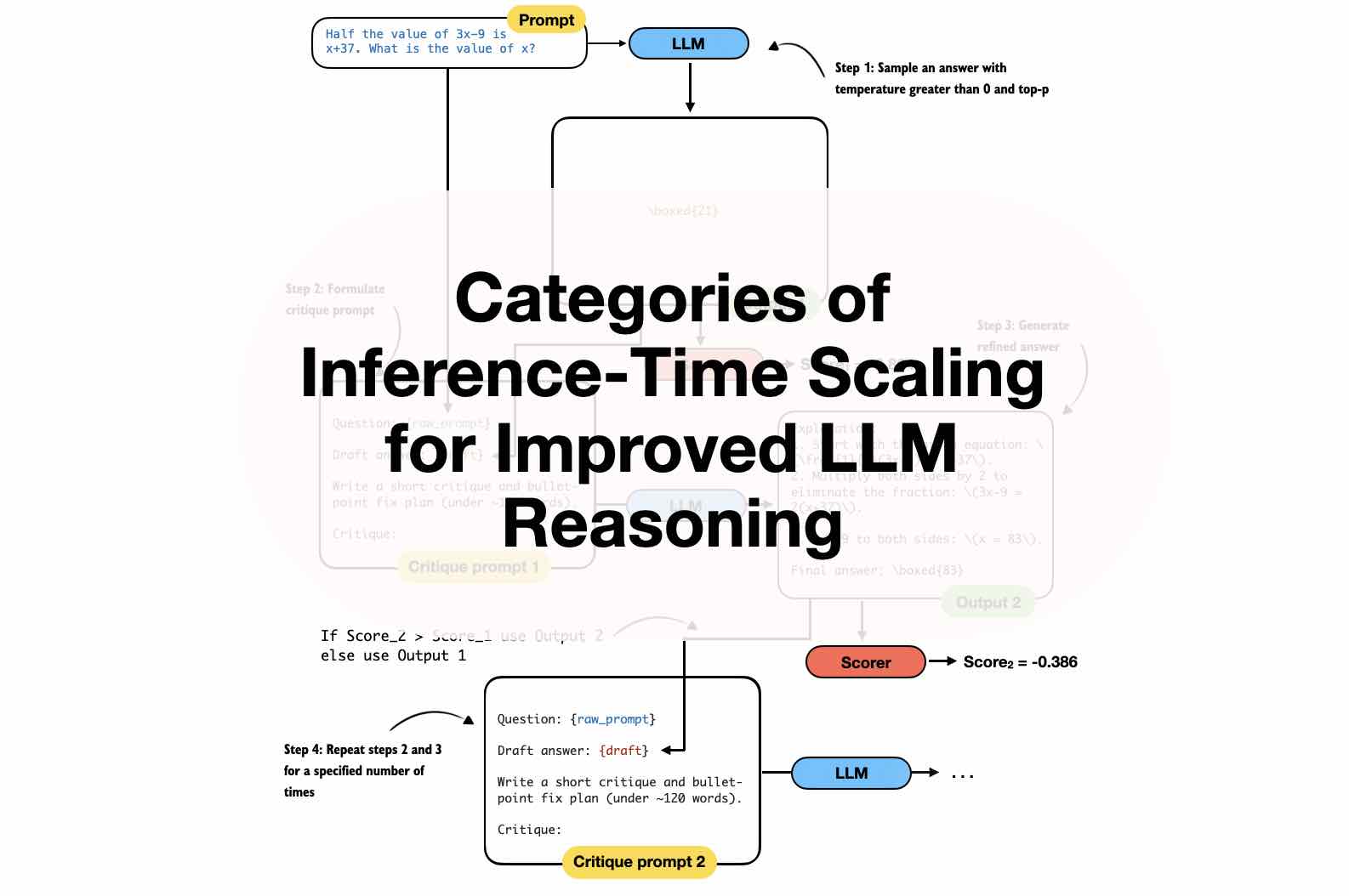

Categories of Inference-Time Scaling

A guide to using more test-time compute to improve LLM reasoning.

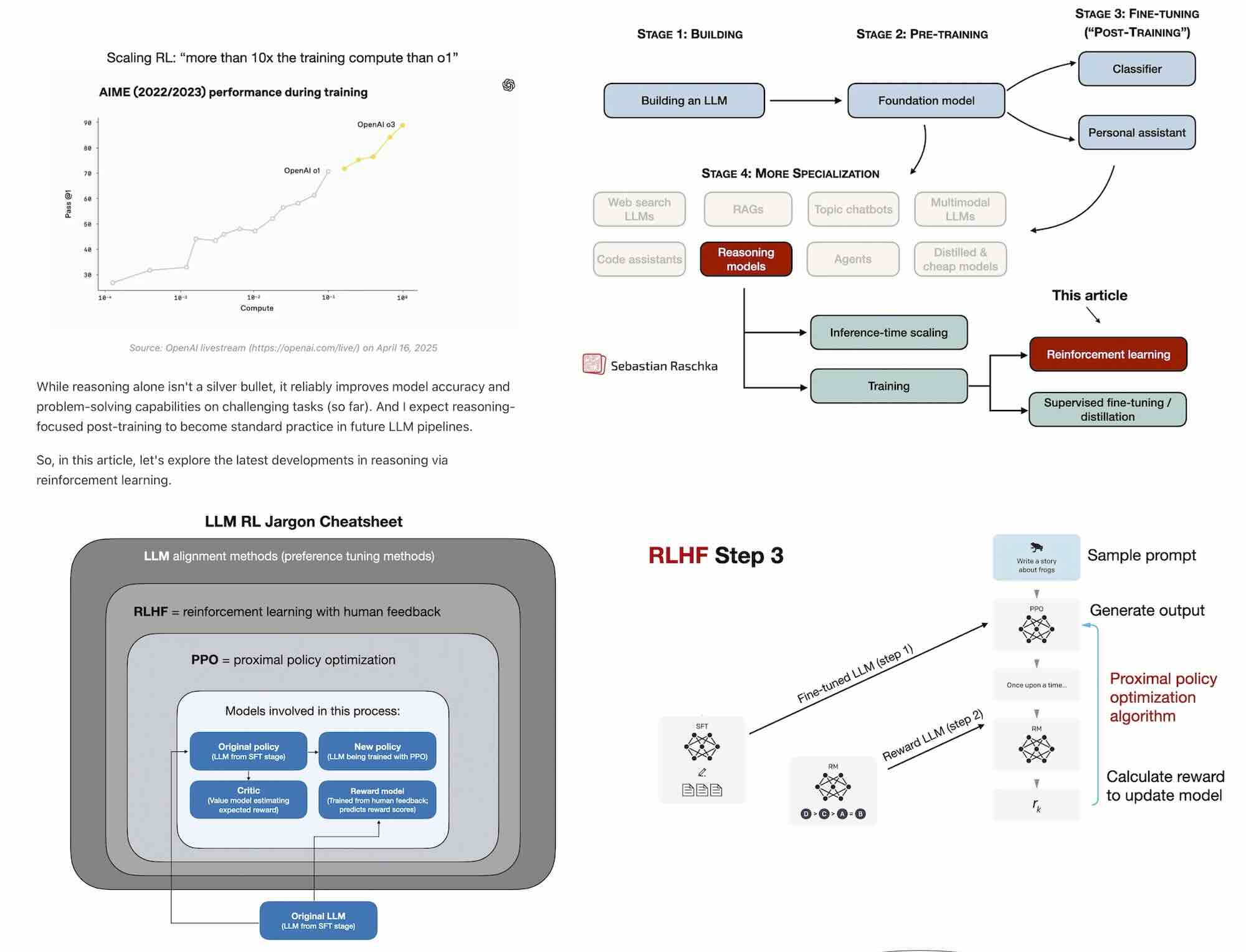

The State of Reinforcement Learning for LLM Reasoning

GRPO, reinforcement learning, and post-training ideas for reasoning models.

Inference-Time Compute Scaling Methods

A deeper look at inference-time compute methods for reasoning-optimized LLMs.

First Look at Reasoning From Scratch

A bridge from the conceptual articles into the code-first reasoning book.

These articles and tutorials are useful when you want concrete PyTorch practice, training-efficiency techniques, and implementation details.

PyTorch in One Hour

A compact tutorial for getting productive with tensors, training loops, and GPUs.

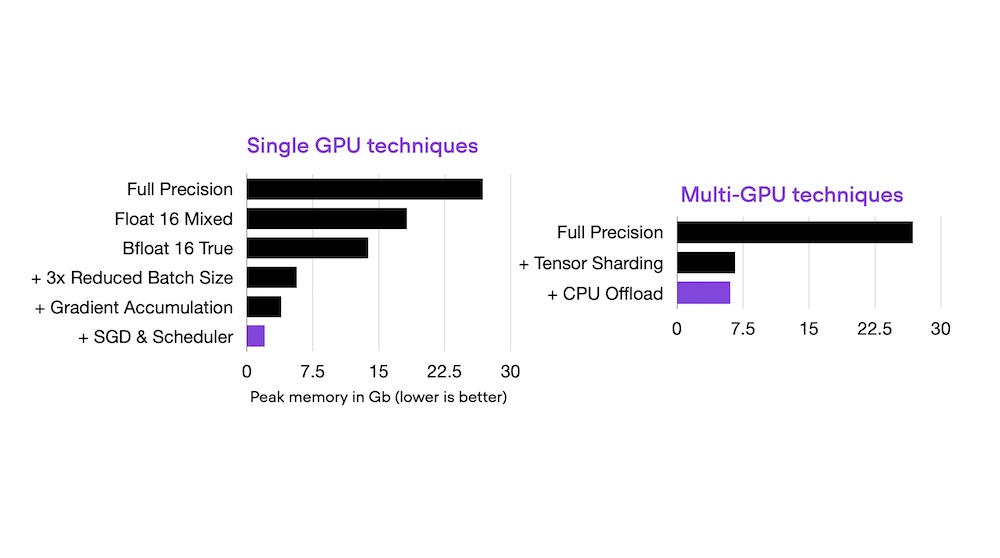

Optimizing Memory Usage in PyTorch

Practical memory-saving methods for LLMs and vision transformers.

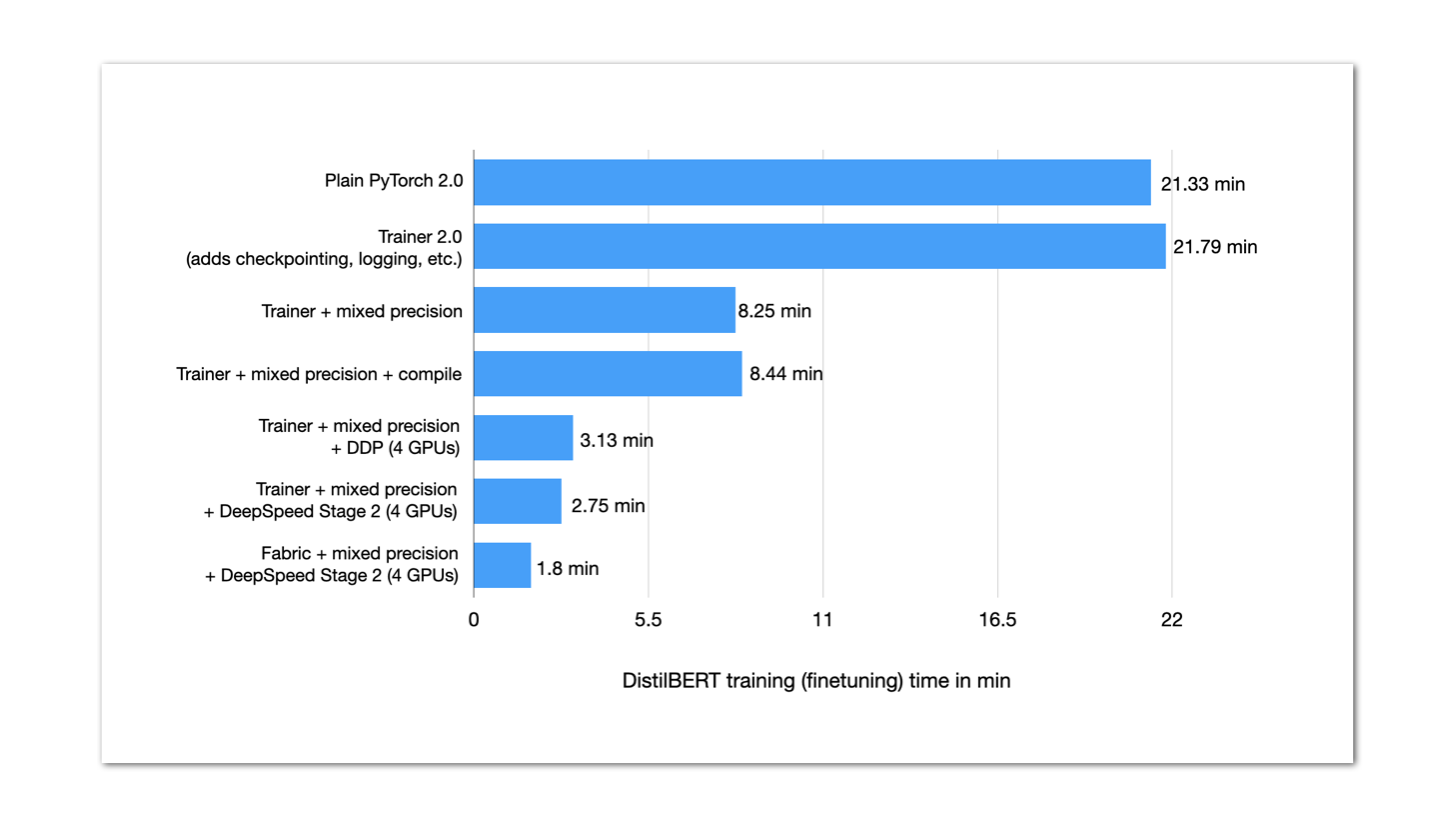

Make Your PyTorch Models Train Faster

A practical tour of performance improvements without changing model accuracy.

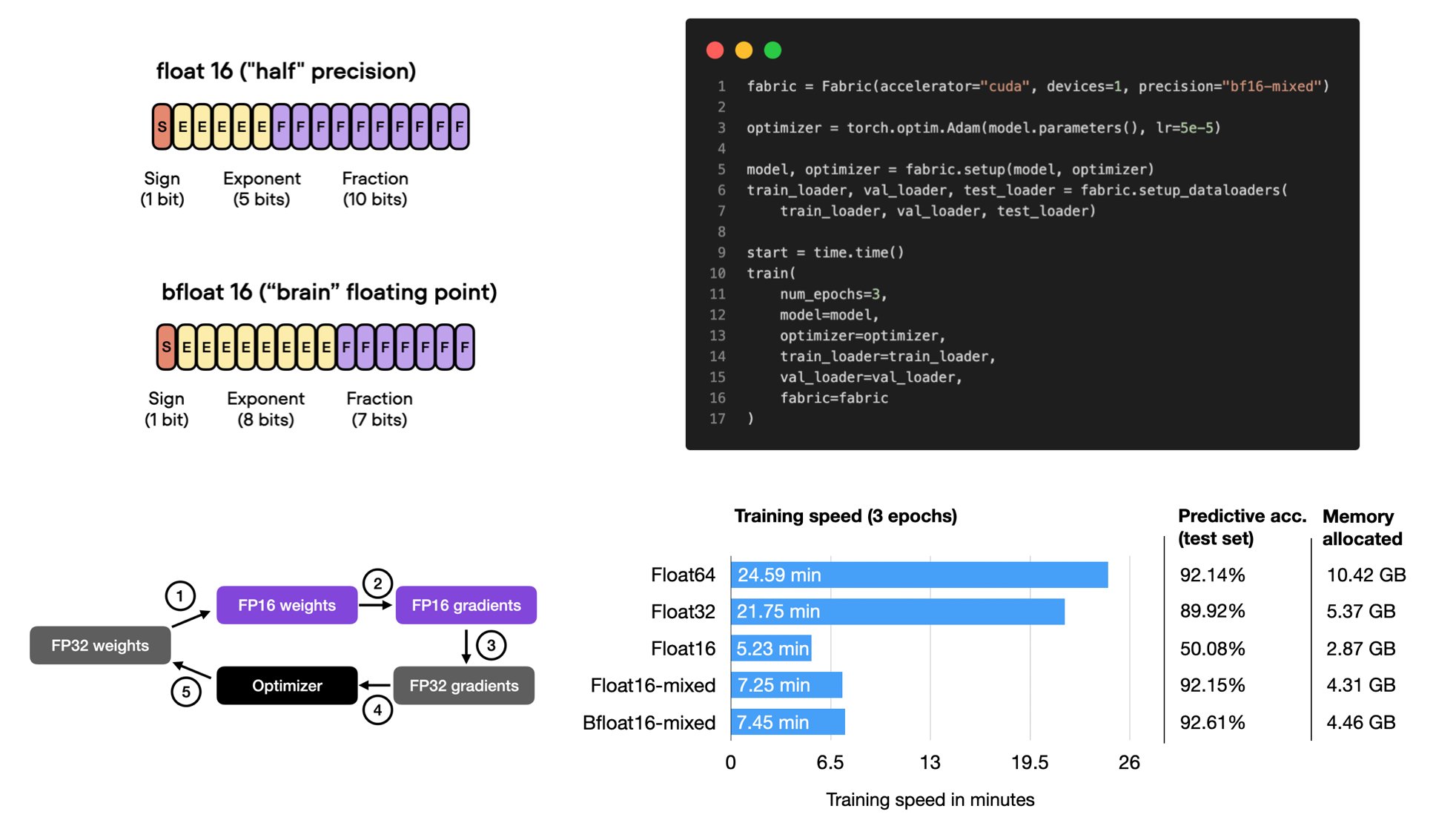

Mixed-Precision Techniques for LLMs

A code-backed explanation of float16, bfloat16, and training speedups.

Finetuning Falcon LLMs with LoRA and Adapters

A practical finetuning comparison for parameter-efficient methods.

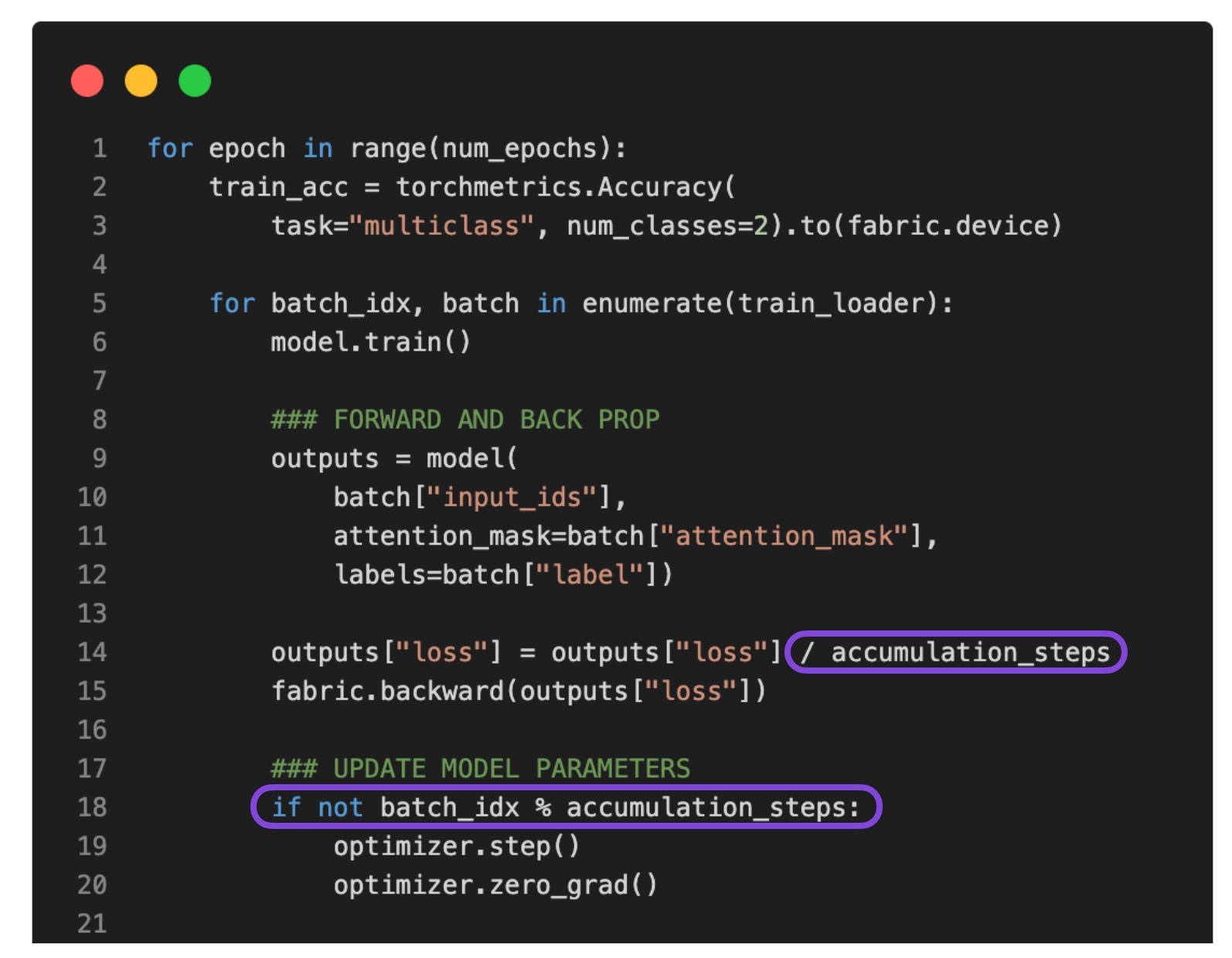

Single-GPU LLM Finetuning with Gradient Accumulation

A practical workaround for larger effective batch sizes when GPU memory is tight.

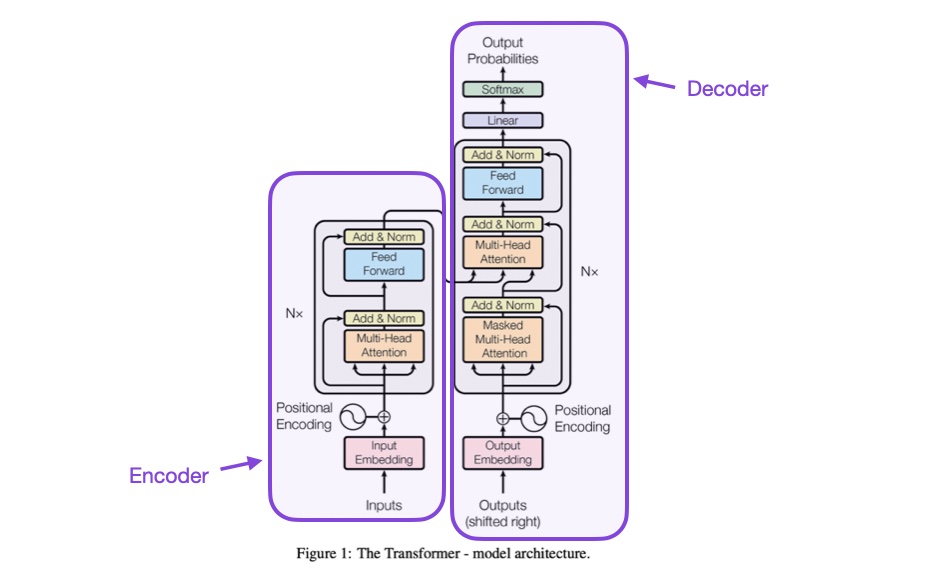

Start here if you want broader context before jumping into longer technical walkthroughs or code-heavy implementations.

Understanding Large Language Models: A Reading List

A guided set of papers and resources for building a first mental model of LLMs.

Developing an LLM: Building, Training, Finetuning

A broad overview of the LLM development lifecycle.

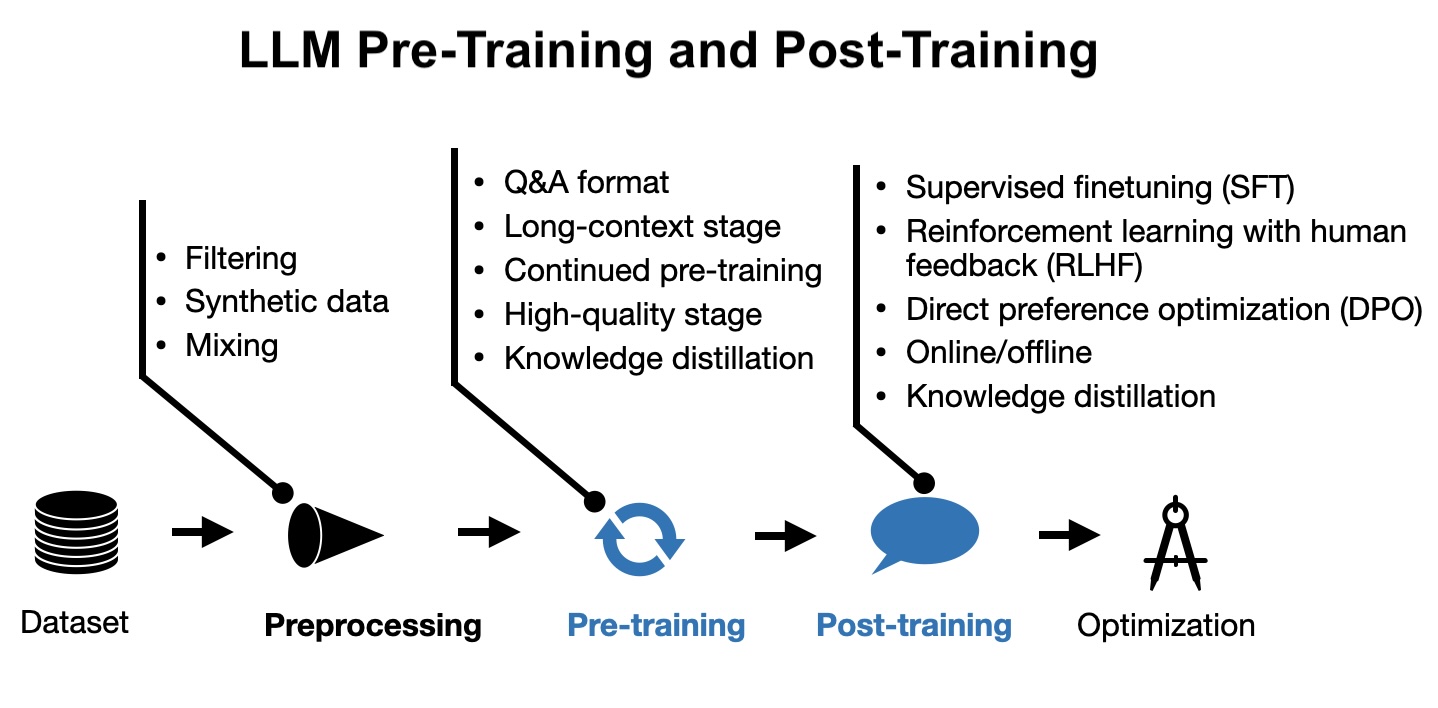

New LLM Pre-training and Post-training Paradigms

A readable tour of how modern LLM training has changed.

Keeping Up With AI Research and News

A practical workflow for navigating the flood of AI papers and updates.

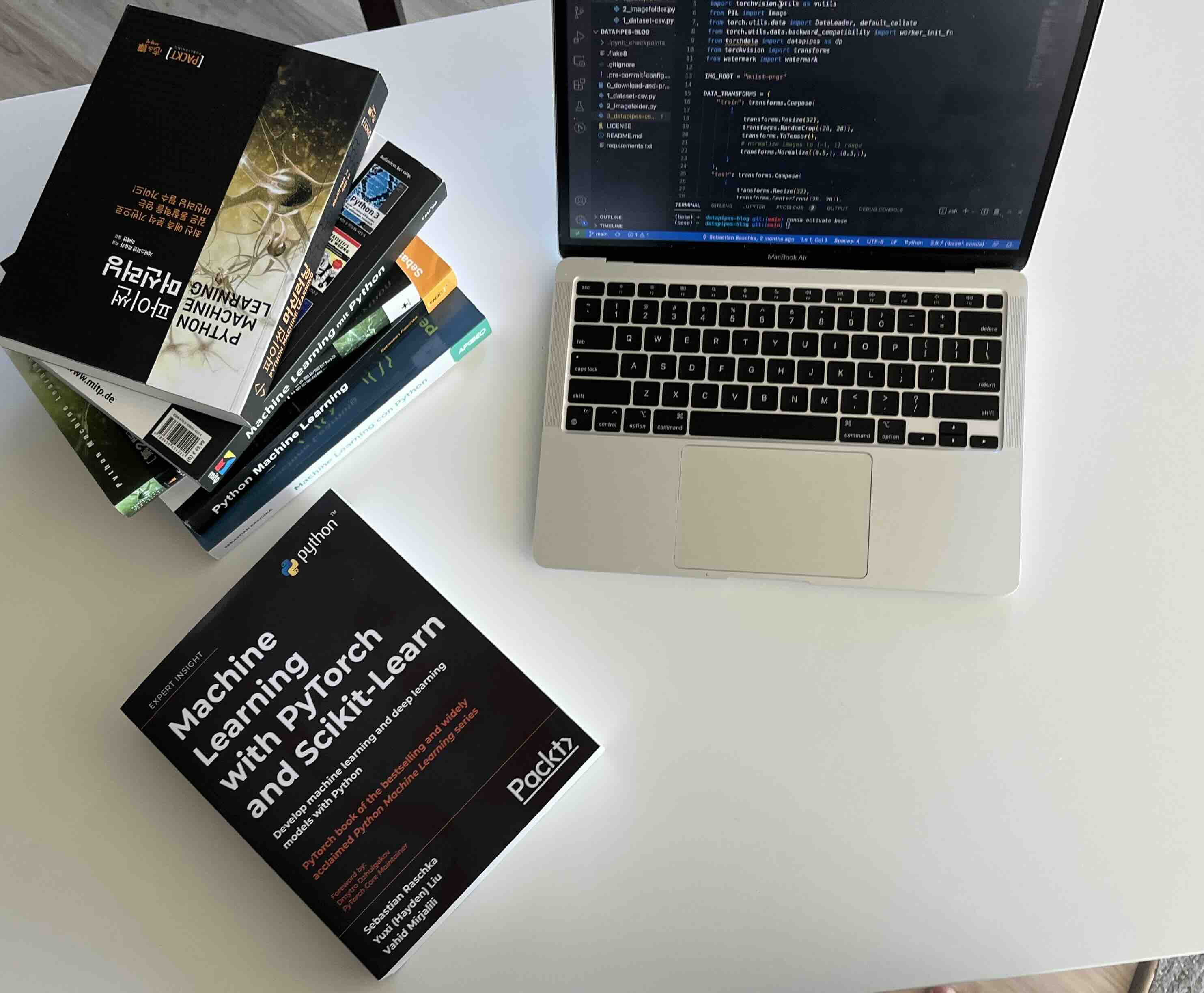

Getting the Most Out of a Technical Book

Study advice for turning technical reading into working knowledge.

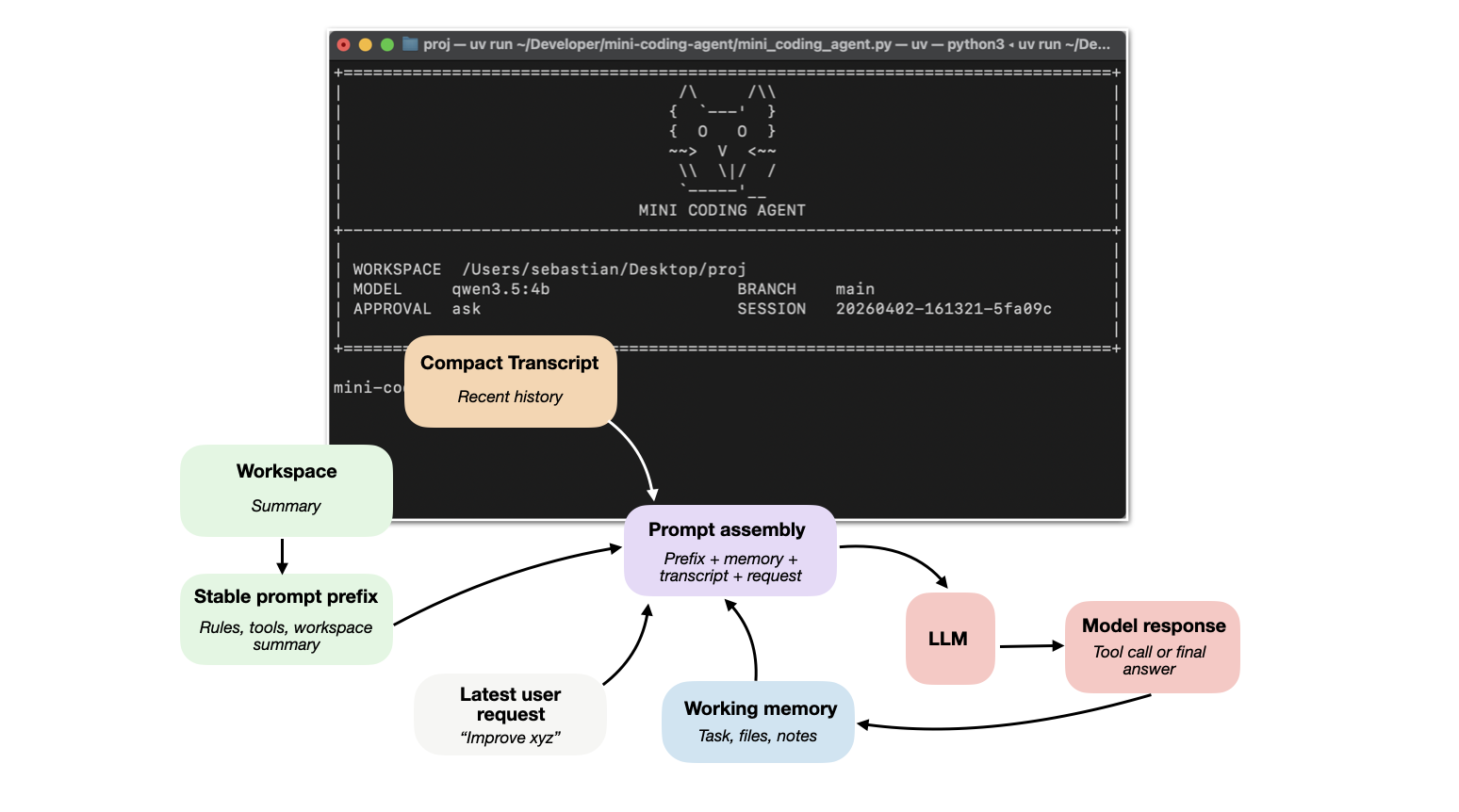

Components of a Coding Agent

A practical entry point into tools, memory, repo context, and coding agents.
